The 10 Coolest Big Data Tools Of 2022 (So Far)

Data is an increasingly valuable asset for businesses and a critical component of many digital transformation and business automation initiatives. Here’s a look at 10 cool tools in the big data management and analytics space that made their debut in the first half of the year.

Tackling The Big Challenges Of Big Data

In 2025, the total amount of digital data and information created, captured, copied and consumed worldwide will reach 181 zettabytes, up from 79 zettabytes in 2021, according to an estimate from market researcher Statista.

Businesses and organizations are struggling to manage, analyze and otherwise use all that data for competitive advantage. Global spending for big data products and services, not surprisingly, is expected to explode from $162.6 billion in 2021 to $273.4 billion in 2026, according to a MarketsandMarkets report. And that means opportunities for solution providers and strategic service providers.

The first half of 2022 has seen the debut of a number of cool new big data tools, both from established companies and startups, that are setting the pace in leading-edge data management and data analytics technologies.

Here’s a look at 10 that have caught our attention.

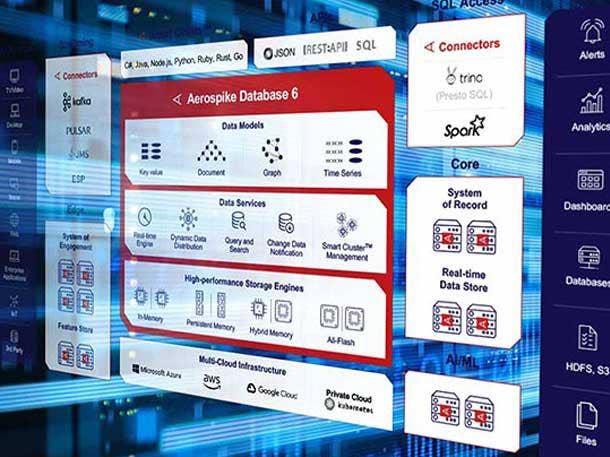

Aerospike 6.0 and Aerospike SQL Powered by Starburst

The Aerospike NoSQL database is a distributed, in-memory data system that can process billions of transactions in real time using massive parallelism and a hybrid memory model. The database, which is the core engine of the Aerospike Real-Time Data Platform, is targeted at multi-cloud, large-scale JSON and SQL use cases.

Aerospike Database 6.0, unveiled in April, provides native support for JSON document models at any scale, according to the company. The new release adds enhanced support for Java programming models, including JSONPath query support to store, search and better manage complex data sets and workloads.

With the new capabilities the database can support large-scale data models in instances across an enterprise and makes it possible to add time series, graph and additional data models in future releases.

Aerospike SQL Powered by Starburst can run massively parallel, complex SQL queries on petabyte-scale data stored in the Aerospike Real-Time Data Platform. The new solution integrates the Aerospike system with Starburst’s SQL-based MPP query engine. Partners and resellers can sell the two technologies as a single SKU.

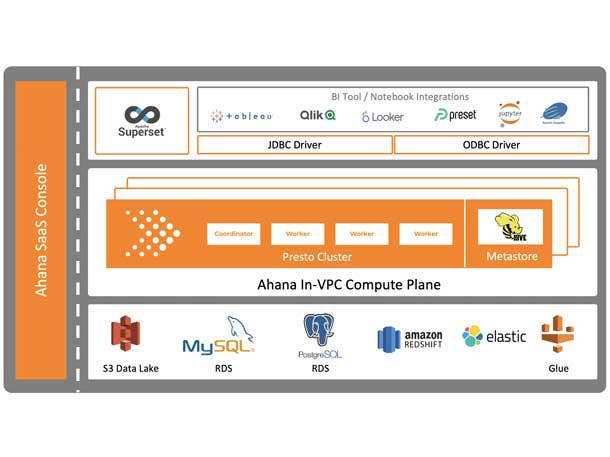

Ahana Cloud for Presto Community Edition

Ahana develops Ahana Cloud for Presto, a data analytics managed service for querying data stored in AWS S3-based data lakehouses. (Presto is an open-source, high-performance, distributed SQL query engine.)

In June, the company debuted Ahana Cloud for Presto Community Edition, a free version of its service that it says makes it easier for businesses and organizations to get started with a data lakehouse system using open-source technology.

The open-source edition provides many of the same components as Ahana’s commercial offering – minus some of its enterprise capabilities – to simplify the implementation of a Presto-based data lakehouse system around data stored in AWS S3.

Capabilities in the new offering include distributed Presto cluster provisioning and tuned out-of-the-box configurations for multiple data sources such as the Hive Metastore for Amazon S3, Amazon OpenSearch, Amazon RDS for MySQL, Amazon RDS for PostgreSQL and Amazon Redshift.

Alteryx Analytics Cloud

Automated analytics platform developer Alteryx launched Alteryx Analytics Cloud in March, offering a cloud-based analytics automation platform that the company said brings analytical insights to a broad swath of users throughout a business organization.

The cloud suite, which information workers can access using a browser, combines Alteryx Designer Cloud, Alteryx Machine Learning, Alteryx Auto Insights and the Trifacta Data Engineering Cloud into one unified solution. (Alteryx acquired Trifacta in February 2022.)

The new cloud service is based on the company’s flagship Alteryx APA (Analytic Process Automation) Platform that unites data analytics, data science and process automation in a single system with low-code/no-code capabilities.

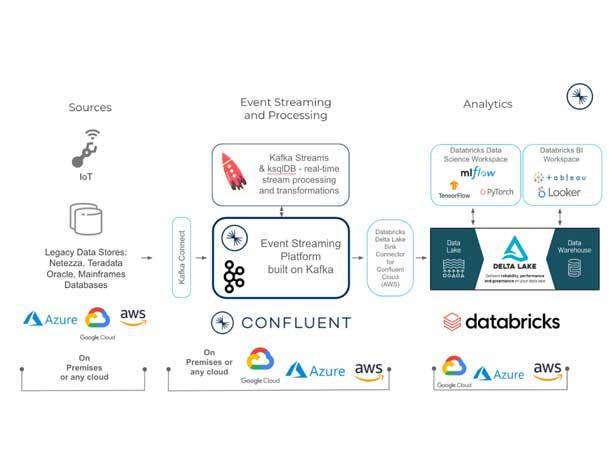

Databricks Vertical Industry Applications

Through the first half of 2022, Databricks, a leading data lakehouse platform developer, launched a series of application packages targeted toward specific vertical industries that tap into the functionality of the Databricks Lakehouse Platform.

The new software included the Lakehouse for Financial Services, the Lakehouse for Media and Entertainment, the Lakehouse for Healthcare and Life Sciences, and the Lakehouse for Retail and Consumer Goods.

The Lakehouse for Financial Services, for example, provides customers in the banking, insurance and capital markets sectors with business intelligence, real-time analytics and AI capabilities. It includes data solutions and use-case accelerators tailored for industry-specific applications such as compliance and regulatory reporting, risk management, fraud and open banking.

The package for healthcare and life sciences addresses challenges in such data-intensive tasks as pharmaceutical development and disease risk prediction using tailored data, AI and analytics accelerators.

Through the Databricks Brickbuilder Solutions program, launched in April, the new software packages also create opportunities for channel partners working within specific vertical industries.

Dremio Cloud, Sonar And Arctic

The Dremio Cloud data lakehouse went live in March, providing a way for data analysts, data engineers and data scientists to tap into huge volumes of data for business analytics, machine learning and other tasks. The cloud-native service is based on the Dremio SQL Data Lake Platform, which includes a query acceleration engine and semantic layer, and works directly with cloud storage systems.

The Dremio Cloud lakehouse is available in a commercial enterprise edition that provides advanced security controls, including custom roles and enterprise identity providers, and enterprise support options. A free standard edition of the service is also offered.

Alongside the Dremio Cloud launch, Dremio debuted Dremio Sonar, a new release of the company’s SQL query engine that powers the cloud lakehouse system. The company also offered a preview of Dremio Arctic, a new metadata and data management service that will work with Dremio Cloud when it’s generally available.

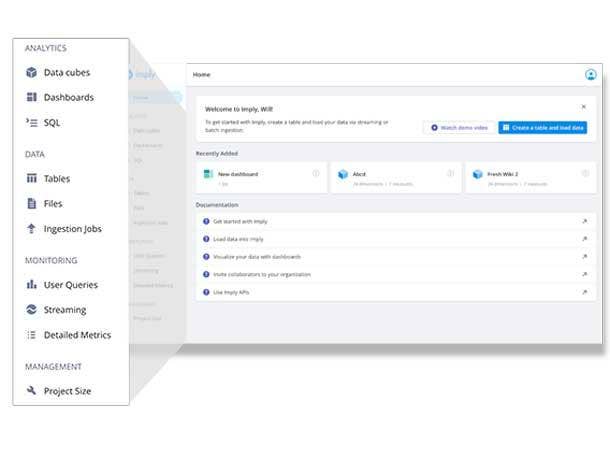

Imply Polaris

In March, Imply, a data analytics startup, launched Polaris, a fully managed cloud database service targeting developers who need to build and deploy high-performance analytical applications.

Polaris is based on Apache Druid, the open-source analytics database that was developed by Imply’s founders. On top of the core Druid functionality, Polaris offers additional “intelligent data operations” functionality including built-in performance monitoring, embedded security, automated configuration and tuning parameters, and a data visualization engine that’s integrated with the database user interface.

Polaris, running on the Amazon Web Services cloud, also includes a built-in, push-based data streaming service that utilizes the Confluent Cloud real-time data/event streaming platform on the back end.

Imply is also developing a multi-stage query engine, also based on the Druid architecture, for the new database service. Both Polaris and the query engine are part of Imply’s Project Shapeshift, an initiative to develop products and services to help developers build high-performance analytical apps.

Precisely Data Integrity Suite

Unveiled in June, the Precisely Data Integrity Suite is made up of seven interoperable SaaS modules that can be deployed individually or combined to help businesses and organizations step up their DataOps game.

The suite includes the new Data Observability software that the company says “proactively and intelligently” detects and surfaces data anomalies before they cause disruptions in operational or analytical processes.

The suite also incorporates existing Precisely tools including Data Integration, Data Governance and Data Quality, along with the company’s Geo Addressing, Spatial Analytics and Data Enrichment software.

Underpinning the entire suite is the Data Integrity Foundation, which includes a common data catalog that enables each module to build on metadata previously gathered by other modules.

Snowflake Unistore

At the Snowflake Summit 2022 conference in June, data cloud service provider Snowflake debuted Unistore, a new service that processes transactional and analytical data workloads together on a single platform. Unistore, according to Snowflake, simplifies and streamlines the development of transactional applications and provides consistent data governance capabilities, high performance and near-unlimited scale.

While transactional and analytical data are traditionally maintained separately, Unistore expands Snowflake’s data cloud to include transactional use cases. At the core of Unistore is Hybrid Tables, new technology that includes single-row operations and allows users to build transactional applications directly on Snowflake and quickly perform analytics on transactional data.

At the conference, Snowflake also debuted the Native Application Framework that customer and partner developers can use to build data-intensive applications, monetize them through the Snowflake Marketplace and run them directly within their Snowflake instances, reducing the need to move data.

Unistore and the Native Application Framework are key products for Snowflake’s plans to expand beyond its data management and data analytics roots into the realm of operational applications.

Tableau Cloud

Tableau Cloud, a cloud-based version of Tableau’s popular data analytics and visualization software, is the next generation of what was previously known as Tableau Online.

Tableau Cloud, launched in May, is connected to the Salesforce Customer 360 system and underlying Salesforce Customer Data Platform, making it possible to analyze customer data – including sales data, service case data and data from marketing campaigns – and then use Salesforce Flow workflow automation applications to act on the analytical results.

Tableau also added a number of new capabilities to the core Tableau platform that are designed to bring data analytics to a wider audience. That includes an AI-based augmented-analytics feature that adds automated, plain-language explanations to Tableau dashboards at scale.

Tableau has also offered previews of Model Builder, powered by Salesforce’s Einstein Discovery AI and machine learning technology, for building predictive analytical models.

Tamr Enrich

Tamr, whose co-founders include big data entrepreneurs Andy Palmer and Michael Stonebraker, develops leading-edge data mastering and unification software.

Tamr Enrich, introduced in May, is a set of enrichment services built natively into the data mastering process. Using Tamr’s machine learning technology, Tamr Enrich curates and actively manages external datasets and services, allowing data managers to embed trusted, high-quality external data into their data mastering pipelines for richer business insights, according to the company.

Using Tamr Enrich, customers can simplify data enrichment tasks, improve match rates, eliminate broken data, standardize data values, better automate data processes and identify data attributes that create new uses for existing data.

The company also says that using Tamr Enrich eliminates the need to hire an outside vendor to update data.