Nvidia Touts H100’s Performance Ahead Of Release

Nvidia said new benchmark test results show that the forthcoming H100 GPU, aka Hopper, raises the bar in per-accelerator AI performance.

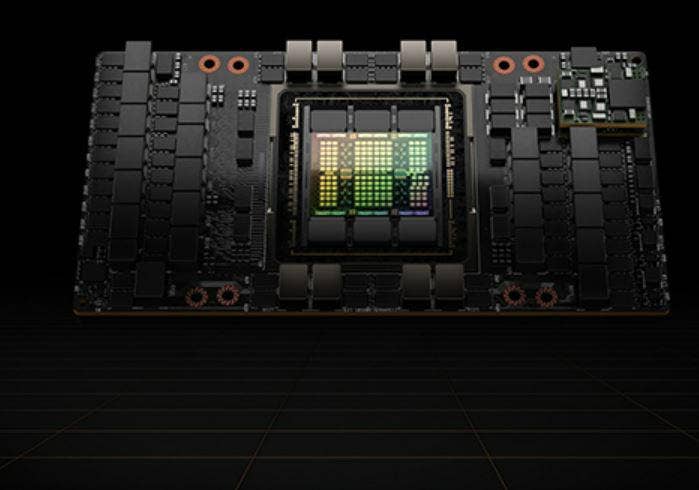

Six months after Nvidia unveiled an accelerated computing platform, code-named Hopper after the pioneering computer scientist Grace Hopper, the Santa Clara, Calif.-based company released more details Thursday about the H100 Tensor Core GPU and its artificial intelligence (AI) capabilities.

In MLPerf industry-standard AI benchmarks, which test machine-learning models, software and hardware, the H100 delivered up to 4.5 times more performance than previous-generation Nvidia GPUs, setting world records in AI inference performance, Nvidia said.

“[We’re] very proud to bring these results to you, delivering up to 4.5x performance versus the previous generation,” said David Salvator, director of AI inference benchmarking and cloud at Nvidia, during a press briefing. “[It’s a] great accomplishment by our engineering team and by our architects, really showing off what H100 is capable of.”

The H100 GPU runs on Nvidia’s new Hopper architecture, the successor to its Ampere architecture.

“The H100 and platforms based on the H100 are designed to tackle the most advanced models, the most challenging AI problems,” Salvator said.

The MLPerf benchmark results represent the first public demonstration of Nvidia’s H100 GPUs, the company said, adding that while this round benchmarked performance in AI inference, the H100 will in the future be measured in MLPerf benchmarks for AI training.

The MLPerf benchmark software covers multiple AI workloads, including computer vision, natural language processing, recommendation systems and speech recognition, Nvidia said. The software was developed by a consortium of AI companies, research labs and academia with a mission to provide unbiased evaluation and testing tools, the company said.

Salvator said the H100 will be available to Nvidia’s channel partners before the end of the year.

When asked to compare H100 to Intel’s Habana Gaudi2, the deep learning processor that Intel insists has outperformed Nvidia’s A100, Salvator declined to offer a prediction.

“In terms of any sort of horse race prediction about how much faster … H100 will be versus Gaudi2, I don’t have the data and even if I did, I can’t comment on it yet,” he said. “All I can say is stay tuned. There will be results on that this fall, and we look forward to coming back and speaking with you about those results here in a few months.”

Brian Venturo, chief technology officer at CoreWeave, said the company expects H100 to quickly become a significant portion of its AI/ML revenue mix. CoreWeave is a privately held, New York-based public cloud provider of GPU-accelerated compute solutions for the computer-generated imagery, machine learning and blockchain sectors.

“We believe the demand for H100 will drive more than 50 percent of our total cloud revenue for 2023,” he said.

Worth Davis, senior vice president at solution provider Calian IT & Cyber Solutions, Ottawa, Ontario, said the availability of H100 is a crucial moment for the technology.

“We’re looking forward to taking these solutions to market when they’re in a quantity that we can deliver to our enterprise accounts,” he said. “Nvidia has been a great partner in innovation and an innovative leader in the industry.”

J.J. Kardwell, CEO of Constant, a West Palm Beach, Fla.-based cloud infrastructure provider, said there has been a lot of anticipation among partners for H100.

“Nvidia has been [a] clear innovation leader in the market, and the most watched part of the GPU sector,” he said. “They are essentially the top of the line for computational and high-performance computing, machine learning and AI-oriented offerings.”