The 10 Coolest Machine Learning Tools Of 2021 (So Far)

Developing and deploying machine learning models can be a complex chore. Here are 10 cool machine learning software tools that have caught our attention this year.

A Learning Experience

As businesses and organizations wrestle with ever-increasing volumes of data, AI, machine learning and deep learning have become key technologies for automating business intelligence tasks and for extending data analytics into predictive analytics.

Developing, deploying and maintaining the machine learning models that make this possible can be a complex, time-consuming process. But a new generation of machine learning software and tools is helping simplify and accelerate machine learning tasks.

Here’s a look at 10 cool machine learning tools that have caught our attention at the mid-point of 2021.


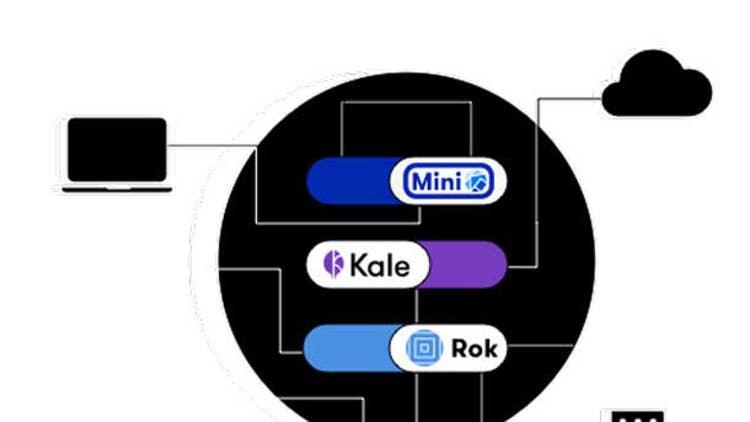

Arrikto Enterprise Kubeflow

Arrikto says the goal of its technology is to apply the same DevOps principles used for software development and deployment to manage data across the machine learning lifecycle – what the company calls “treating data as code.”

Enterprise Kubeflow is Arrikto’s machine learning operations (MLOps) platform, which the company says “simplifies, accelerates and secures” the entire machine learning model lifecycle, from development through production, enabling MLOps teams to bring machine learning models to market 30 times faster than with traditional ML platforms.

Enterprise Kubeflow provides automated workflows, reproducible pipelines, secure access to data, and consistent deployment from desktop to cloud.

Big Squid Kraken

Big Squid’s Kraken AutoML is an automated machine learning platform for building and deploying machine learning models for business analytics – including within existing analytics stacks – without the need to write code.

Kraken’s no-code capabilities simplify the adoption of machine learning and AI, helping data analysts, data scientists, data engineers and business users collaborate on machine learning and predictive analytics projects.

DotData Py Lite

DotData, a developer of AI automation and operationalization tools, offers DotData Py Lite, a containerized AI automation system that enables data scientists to quickly deploy the DotData system on their desktop computers and run machine learning proofs of concept.

Py Lite, launched in May, is designed for Python data scientists and provides automated feature engineering and automated machine learning in a portable environment.

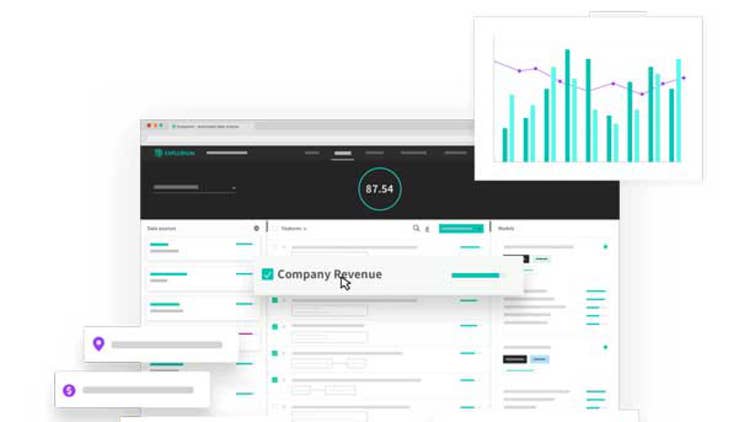

Explorium External Data Platform

Explorium’s External Data platform automatically discovers thousands of relevant data signals and uses them to improve machine learning model performance and the predictive analytics they drive.

At the platform’s core is the AutoML engine that powers the system’s automated data discovery, automated feature generation and selection, and model building and deployment capabilities.
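To make “automated feature generation and selection” concrete, here is a minimal sketch of the general idea, assuming a very simple strategy chosen for illustration: generate candidate features as pairwise products of the raw columns, then keep the candidates most correlated with the target. This is not Explorium’s engine; the function names (`generate_features`, `select_features`) are hypothetical, and real AutoML systems use far richer transformations and selection criteria.

```python
import itertools
import statistics


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either column is constant."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / (vx * vy) ** 0.5


def generate_features(columns):
    """Hypothetical generator: the raw columns plus every pairwise product."""
    candidates = dict(columns)
    for (a, xa), (b, xb) in itertools.combinations(columns.items(), 2):
        candidates[f"{a}*{b}"] = [u * v for u, v in zip(xa, xb)]
    return candidates


def select_features(candidates, target, k):
    """Keep the k candidates with the largest absolute correlation to the target."""
    ranked = sorted(
        candidates,
        key=lambda name: abs(pearson(candidates[name], target)),
        reverse=True,
    )
    return ranked[:k]
```

For example, with `columns = {"price": [1, 2, 3, 4], "qty": [4, 3, 2, 1]}` and a revenue target of `[4, 6, 6, 4]`, neither raw column correlates with revenue, but the generated `price*qty` feature matches it exactly and is the one selected.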

Iterative.ai DVC Studio

In June, machine learning operations (MLOps) startup Iterative.ai launched DVC Studio, a visual user interface based on the Data Version Control (DVC) and Continuous Machine Learning (CML) open-source projects. Iterative.ai is the company behind the development of those projects, and DVC Studio is its first commercial product.

DVC Studio simplifies ML model development and enhances collaboration by extending familiar software engineering tools and practices, such as Git and CI/CD (continuous integration and continuous delivery), to meet the needs of ML researchers, ML engineers and data scientists.
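The core idea behind extending Git to data is that large files are tracked by a content hash: a small pointer file goes into Git, while the bulky data lives in a separate cache keyed by that hash. The sketch below illustrates that general pattern only. It is not DVC’s code or file format; the pointer-file naming (`<file>.ptr.json`) and the functions `track` and `checkout` are hypothetical.

```python
import hashlib
import json
import shutil
from pathlib import Path


def file_md5(path):
    """Hash a file in 1 MiB chunks so large datasets need not fit in memory."""
    h = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def track(data_path, cache_dir):
    """Copy a file into a content-addressed cache and write a small pointer file.

    The pointer file (hypothetical name: <file>.ptr.json) is what would be
    committed to Git; the data itself lives in the cache, keyed by its hash.
    """
    data_path, cache_dir = Path(data_path), Path(cache_dir)
    digest = file_md5(data_path)
    cached = cache_dir / digest[:2] / digest[2:]
    cached.parent.mkdir(parents=True, exist_ok=True)
    if not cached.exists():  # identical content is stored only once
        shutil.copy2(data_path, cached)
    pointer = data_path.with_name(data_path.name + ".ptr.json")
    pointer.write_text(json.dumps({"path": data_path.name, "md5": digest}))
    return pointer


def checkout(pointer_path, cache_dir):
    """Restore the data file version recorded in a pointer file."""
    pointer_path, cache_dir = Path(pointer_path), Path(cache_dir)
    meta = json.loads(pointer_path.read_text())
    digest = meta["md5"]
    target = pointer_path.with_name(meta["path"])
    shutil.copy2(cache_dir / digest[:2] / digest[2:], target)
    return target
```

Because each data version maps to a different pointer-file content, checking out an older Git commit (and with it an older pointer file) is enough to restore the matching dataset from the cache.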

Neurothink

Neurothink, which emerged from stealth in May, offers its Neurothink machine learning platform as an alternative to building models on public cloud service platforms. The company says its goal is to bring ML capabilities to a wider audience with less complexity and greatly improved API security in machine learning workflows.

The Neurothink system, based on high-performance GPU and CPU servers, provides an end-to-end environment and tools for building, training and deploying machine learning models.

OctoML Octomizer

The OctoML Octomizer acceleration platform is used by engineering teams to deploy machine learning models and algorithms on any hardware system, cloud platform or edge device.

Octomizer automatically optimizes and benchmarks machine learning model performance, helping to close the gap between building ML models and putting them into production, according to the company.

Octomizer is a commercial version of Apache TVM, an automated deep learning model optimization and compilation stack that was developed by OctoML’s founders. Octomizer has been available in early access mode since December 2020.

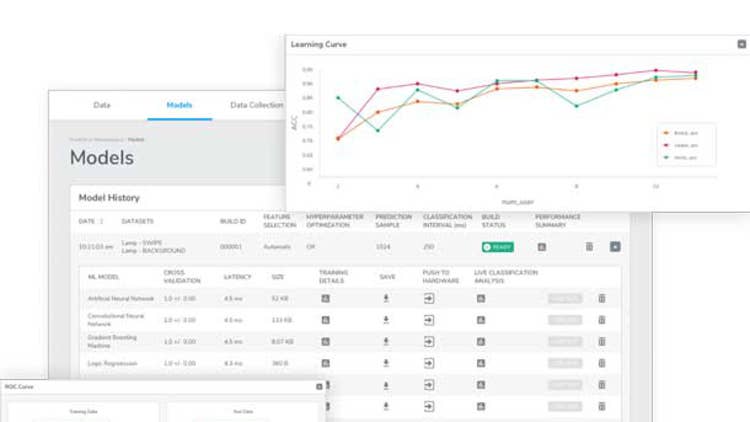

Qeexo AutoML

The Qeexo AutoML platform is focused on automating end-to-end machine learning for edge computing devices, automatically building what the company calls “tinyML” machine learning models.

Qeexo is particularly targeted at Internet of Things and Industrial IoT applications, developing ML models for embedded sensors for anomaly detection, predictive maintenance and other tasks.
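As a point of reference for what sensor anomaly detection involves, here is a minimal sketch of a classic statistical baseline: a rolling z-score detector with a fixed-size window, so memory use stays constant, which matters on embedded hardware. This is a stand-in chosen for illustration, not Qeexo’s method (the platform builds machine learning models), and the class name, window size and threshold are assumptions.

```python
import statistics
from collections import deque


class RollingZScoreDetector:
    """Flag readings that sit far from the mean of a sliding window of recent readings."""

    def __init__(self, window=20, threshold=3.0):
        # deque with maxlen keeps memory bounded: old readings drop off automatically
        self.window = deque(maxlen=window)
        self.threshold = threshold

    def update(self, value):
        """Return True if `value` is anomalous relative to the current window, then store it."""
        anomalous = False
        if len(self.window) >= 2:  # stdev needs at least two points
            mean = statistics.fmean(self.window)
            std = statistics.stdev(self.window)
            if std > 0 and abs(value - mean) / std > self.threshold:
                anomalous = True
        self.window.append(value)
        return anomalous
```

Feeding it a stream such as `[10.0, 10.1, 9.9, 10.0, 10.2, 9.8, 10.1, 25.0]` flags only the final spike. A learned model would replace the fixed threshold with patterns learned from labeled or historical sensor data.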

Spell DLOps

Spell.ml develops a machine learning platform for deep learning operations (DLOps) that the company says goes beyond traditional machine learning with its capabilities for preparing, training, deploying and managing the full lifecycle of machine learning and deep learning models. Spell.ml says its cloud-agnostic platform can help reduce the costs of deep learning model development.

Deep learning is a segment of the machine learning world that relies on multi-layer artificial neural networks and is often used for complex tasks such as image recognition and natural language processing. Deep learning models are compute-intensive and often require high-performance systems running on GPUs and next-generation AI processors.

Tecton

Startup Tecton has been attracting a lot of attention with its enterprise feature store technology for machine learning. The company, which exited stealth in April 2020, was founded by the developers who created Uber’s Michelangelo machine learning platform.

Feature stores are a critical component of the machine learning stack. They are used to build features from raw data and serve them to production machine learning systems, a data pipeline problem that the company calls the hardest part of operationalizing machine learning.

Delivered as a fully managed cloud service, the Tecton feature store manages the complete lifecycle of machine learning features, allowing ML teams to build features that combine batch, streaming and real-time data.

Tecton says its system orchestrates feature transformations to continuously transform new data into fresh feature values. Features can be served instantly for training and online inference with monitoring of operational metrics. And ML teams can use Tecton to search and discover existing features to maximize re-use across models.
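The two serving paths described above, fresh values for online inference and historical values for training, can be illustrated with a toy in-memory store. This is a minimal sketch under stated assumptions, not Tecton’s API: the class and method names are hypothetical, and it shows only one core idea, namely that training lookups should be “as of” a timestamp so a model never sees feature values computed after the event it is learning from.

```python
from bisect import bisect_right
from collections import defaultdict


class ToyFeatureStore:
    """Hypothetical in-memory feature store: a timestamped history per (entity, feature)."""

    def __init__(self):
        # (entity_id, feature_name) -> parallel lists of timestamps and values, sorted by time
        self._times = defaultdict(list)
        self._values = defaultdict(list)

    def write(self, entity_id, feature, timestamp, value):
        """Record a freshly computed feature value (e.g. from a batch or streaming job)."""
        key = (entity_id, feature)
        i = bisect_right(self._times[key], timestamp)
        self._times[key].insert(i, timestamp)
        self._values[key].insert(i, value)

    def get_online(self, entity_id, feature):
        """Online inference: return the most recent value, or None if never written."""
        values = self._values.get((entity_id, feature))
        return values[-1] if values else None

    def get_as_of(self, entity_id, feature, timestamp):
        """Training: return the latest value at or before `timestamp` (no future leakage)."""
        key = (entity_id, feature)
        times = self._times.get(key, [])
        i = bisect_right(times, timestamp)
        return self._values[key][i - 1] if i else None
```

For example, after writing a `txn_count_7d` value of 3 at time 100 and 5 at time 200 for one user, `get_online` returns 5, while a training example labeled at time 150 receives 3 from `get_as_of`.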