The 10 Coolest New Big Data Technologies And Tools Of 2018

As the volume of data that businesses try to collect, manage and analyze continues to explode, spending on big data management and business analytics technologies is expected to reach $260 billion by 2022. Here are 10 big data products that caught our attention in 2018.

Managing The Data Tsunami

Ever-increasing volumes of data, new data-generation sources like Internet of Things networks, and the growing use of artificial intelligence and machine learning to manage and analyze all that data are just some of the drivers behind the continuing evolution of big data technology.

Spending on big data and business analytics solutions is expected to hit $166 billion in 2018, up 11.7 percent from 2017, and to continue growing at a compound annual growth rate (CAGR) of 11.9 percent to reach $260 billion in 2022.
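As a quick sanity check on those figures, the short sketch below compounds the quoted $166 billion at 11.9 percent a year over the four years to 2022 and also backs out the 2017 spending implied by the 11.7 percent increase. The variable names are illustrative, and the only inputs are the numbers quoted above.

```python
# Figures quoted in the article (billions of US dollars).
spend_2018 = 166.0
growth_2018 = 0.117   # growth in 2018 over 2017
cagr = 0.119          # compound annual growth rate through 2022
years = 2022 - 2018

# Compound growth: value_n = value_0 * (1 + rate) ** n
projected_2022 = spend_2018 * (1 + cagr) ** years

# Working backward from the 2018 figure gives the implied 2017 spend.
implied_2017 = spend_2018 / (1 + growth_2018)

print(f"Implied 2017 spend:   ${implied_2017:.1f} billion")
print(f"Projected 2022 spend: ${projected_2022:.1f} billion")
```

The projection rounds to the $260 billion quoted for 2022, so the growth rate and the endpoint are consistent with each other.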

That growth is fueling a continuous stream of startups in the big data technology arena. And it's also pushing more established players to maintain a rapid pace of developing and delivering new and updated big data products.

Here are 10 big data products that debuted in 2018 and caught our attention.

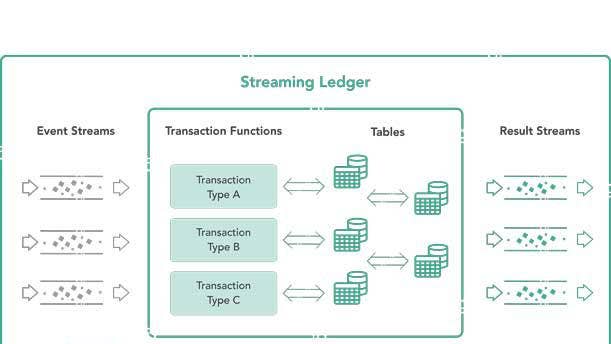

Data Artisans Streaming Ledger

Data Artisans' Streaming Ledger software makes it possible to process serializable ACID transactions using streaming data. Until now it has been difficult to apply ACID (atomicity, consistency, isolation and durability) standards, which guarantee data integrity in distributed transactions, to streaming data processing. Streaming Ledger, part of the River Edition of the Data Artisans platform, supports serializable transactions that span multiple tables, rows and data streams.
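To make the idea concrete, here is a toy sketch of what an atomic, serializable update across two tables looks like when the updates arrive as a stream of events. It is plain Python and does not use or represent the Streaming Ledger API. The table names, event fields and function names are all hypothetical, events are simply processed one at a time, and isolation under concurrency and durability are not modeled.

```python
import copy

def apply_transfer(tables, event):
    """Apply one transfer event to both tables atomically.

    Works on a copy and returns it only if every check passes, so a
    failed event leaves the original tables untouched (atomicity).
    """
    draft = copy.deepcopy(tables)
    src, dst, amount = event["from"], event["to"], event["amount"]
    accounts = draft["accounts"]
    if amount <= 0 or src not in accounts or dst not in accounts:
        return tables, False
    if accounts[src] < amount:
        return tables, False  # consistency rule: no negative balances
    accounts[src] -= amount
    accounts[dst] += amount
    draft["ledger"].append((src, dst, amount))
    return draft, True

def process_stream(tables, events):
    """Process events strictly in order, which is trivially serializable."""
    rejected = []
    for event in events:
        tables, ok = apply_transfer(tables, event)
        if not ok:
            rejected.append(event)
    return tables, rejected

tables = {"accounts": {"alice": 100, "bob": 20}, "ledger": []}
events = [
    {"from": "alice", "to": "bob", "amount": 70},
    {"from": "alice", "to": "bob", "amount": 50},  # rejected: balance too low
    {"from": "bob", "to": "alice", "amount": 10},
]
final, rejected = process_stream(tables, events)
print(final["accounts"], len(final["ledger"]), len(rejected))
```

Each accepted event changes the account table and the ledger table together or not at all. The hard part in a real distributed streaming system is providing the same guarantee when events are processed in parallel across machines.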

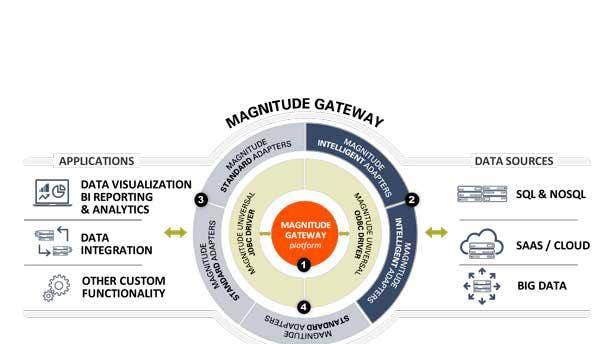

Magnitude Software Magnitude Gateway

Accessing data scattered across multiple locations for operational and analytical purposes is one of the biggest challenges with big data.

Magnitude Software's Magnitude Gateway is a universal data connectivity platform that provides immediate access to operational and analytical data wherever it resides. The software supports nearly 100 data sources through universal drivers that connect to relational databases, big data systems, Software-as-a-Service applications and NoSQL databases.

The system makes it possible for business users to access multiple data sources using Magnitude Gateway's self-service capabilities. It also helps ISVs that develop business analytics and data-source integration software get to market faster by providing pre-built data connectivity.

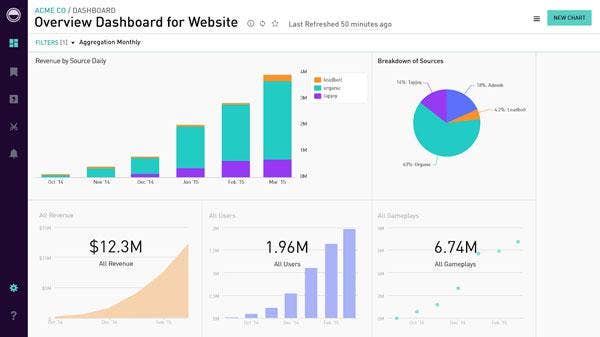

Periscope Data Engine

Periscope Data, which has enjoyed rapidly growing sales of its flagship analytics platform, launched the Data Engine by Periscope Data in November in a move that expands the company's technology portfolio into the realm of data ingestion and processing.

Collecting, transforming and formatting data before using it for business analysis can be complex and time-consuming. Periscope Data said the new Data Engine bypasses the most challenging steps in data ETL (extract, transform and load) processes, helping data teams achieve faster data ingestion and query performance for any kind of workload, regardless of concurrency, data volume or query complexity.

Periscope Data said the new technology is the first phase of an extensive next-generation analytics warehouse integration system that the company is building out. Data Engine provides users with a way to ingest and process data on Snowflake Computing or AWS Redshift cloud systems. In coming months the company will add a series of data storage and processing options to the Data Engine system.

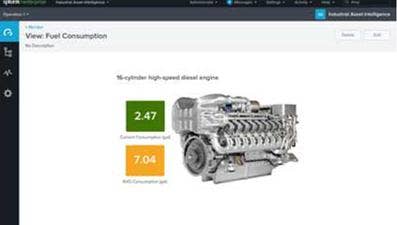

Splunk For Industrial IoT

In April Splunk, a developer of software for managing and analyzing machine data, took the wraps off Splunk for Industrial IoT, the company's first product specifically for Internet of Things data collection and analysis.

Splunk for Industrial IoT targets applications in the industrial IoT space, including manufacturing, transportation, oil and gas, and energy/utilities.

Splunk for Industrial IoT is designed to bridge the gap between operational technology and traditional IT, including business analytics. The software provides capabilities for collecting, monitoring and analyzing, in real time, the industrial IoT machine data generated by industrial controllers, sensors and operational applications.

SQream DB v3.0

SQream launched a new release of its SQream DB GPU-accelerated data warehouse system with double the data load speeds of earlier versions and query speeds up to 15 times faster. The SQream database is designed to process and analyze huge datasets, supporting terabytes and even petabytes of data.

Other enhancements in the 3.0 edition include dynamic workload management for resource workflow prioritization and a highly optimized Spark connector. SQream now offers the database as a Docker container image for easier deployment and upgrades.

Tableau Prep

Recent studies have shown that data analysts spend as much as 80 percent of their time preparing data and only 20 percent analyzing it. Data analytics software developer Tableau Software has sought to reduce data preparation times with Tableau Prep, a data preparation product that makes it easier for everyday workers to combine, shape and clean data for data analysis.

The software, which emphasizes data visualization functionality, includes features that automate complex data preparation tasks such as joins, pivots and aggregations. The new product also provides smart algorithms such as "fuzzy clustering" that automate repetitive tasks such as grouping by pronunciation or cleaning by punctuation.
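As a rough illustration of the "cleaning by punctuation" idea, the sketch below groups text values that become identical once case, punctuation and extra whitespace are removed. This is not Tableau Prep's algorithm, which the article does not describe. The function names and sample values are hypothetical, and grouping by pronunciation would need a phonetic encoding that is not shown here.

```python
import string
from collections import defaultdict

def normalize(value):
    """Lowercase, drop ASCII punctuation and collapse runs of whitespace."""
    stripped = value.translate(str.maketrans("", "", string.punctuation))
    return " ".join(stripped.lower().split())

def group_variants(values):
    """Group raw values that share the same normalized form."""
    groups = defaultdict(list)
    for value in values:
        groups[normalize(value)].append(value)
    return dict(groups)

raw = ["Acme, Inc.", "ACME Inc", "acme inc.", "Globex Corp.", "Globex  Corp"]
groups = group_variants(raw)
for key, members in groups.items():
    print(key, "<-", members)
```

An analyst could then pick one canonical spelling per group, which is the kind of repetitive cleanup such tools aim to automate.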

Tableau Prep is integrated with Tableau's data analysis workflow and is offered as part of the company's Creator subscription offerings. Current users of Tableau Desktop can use Tableau Prep at no charge for two years.

Teradata Vantage

In October Teradata, one of the most established companies in the data warehouse and business analytics space, announced the general availability of Teradata Vantage, the company's next-generation analytics platform.

Vantage is a "pervasive data intelligence" platform built around the Teradata SQL Engine, which has the Teradata Database at its core and can process a range of descriptive, predictive and prescriptive analytics tasks that require integrated data.

Vantage also includes a machine learning engine for developing machine learning functions and a graph engine for processing graph analysis workloads. And Teradata's recently introduced 4D Analytics, which can handle location, time series and temporal data from Internet of Things networks, is also a key component.

TimescaleDB 1.0

TimescaleDB is an open-source time-series database that's capable of handling huge volumes of machine-generated data, ingesting millions of data points per second, scaling up to tens of terabytes of data and tables with hundreds of billions of rows, and processing complex queries faster than other time-series databases.

The database natively supports full SQL and is packaged as a Postgres extension, allowing it to work with Postgres tooling and other elements of the Postgres ecosystem. An earlier version of the database, released in April 2017, has surpassed 1 million downloads.

Yellowbrick Data

Yellowbrick Data, founded in 2014, emerged from stealth this year with an all-flash analytics and data warehouse appliance that the startup says is orders of magnitude smaller and faster than current data warehouse systems.

Yellowbrick Data developed a system architecture that's based on flash memory hardware and software developed to handle native flash memory queries. The appliance includes integrated CPU, storage and networking with data moving directly from flash memory to the CPU. The system's modular design can scale up to handle petabytes of data by adding analytic nodes.

The system includes an analytic database designed for flash memory, able to handle high-volume data ingestion and processing, and capable of running mixed workloads of ad hoc queries, large batch queries, reporting, ETL (extract, transform and load) processes and ODBC inserts.

The company says its system operates 140 times faster than conventional data warehouse systems for such tasks as retail and advertising analytics, security analysis and fraud detection, financial trading analysis, electronic health records processing and other applications.

Yellowfin Signals and Yellowfin Stories

Yellowfin, a Melbourne, Australia-based business intelligence software developer, in October debuted Signals and Stories, two analytical applications that help business users more quickly discover, understand and act on business opportunities.

The Yellowfin Signals analytical engine provides automated data analysis, examining time slices of live and continuous business data to identify critical changes in information, such as sudden spikes or changes in trends. Continuous time-series detection of trends and anomalies helps users recognize critical issues in real time and understand what's driving the changes.
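One simple way to flag a "sudden spike" in a time series is to compare each new value with the mean and standard deviation of a trailing window. The sketch below shows that generic technique only; it is not Yellowfin's method, which the article does not detail, and the function name, window size, threshold and sample data are assumptions chosen for illustration.

```python
from statistics import mean, stdev

def find_spikes(series, window=5, threshold=3.0):
    """Return indices whose value deviates from the trailing-window mean
    by more than `threshold` sample standard deviations."""
    spikes = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip flat windows, where the standard deviation is zero.
        if sigma > 0 and abs(series[i] - mu) > threshold * sigma:
            spikes.append(i)
    return spikes

hourly_orders = [100, 102, 98, 101, 99, 100, 103, 180, 101, 99]
print(find_spikes(hourly_orders))  # prints [7]
```

Only the jump to 180 is flagged. The values that follow it are not, because the spike itself inflates the trailing window's standard deviation, a known weakness of this naive approach.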

Yellowfin Stories is designed to help business users better understand analytical results, creating "data stories" that put information in context for faster, better-informed decision making. By embedding live reports from multiple BI dashboards, according to the company, Stories creates "narratives" with consistent, common understanding within an organization, improving knowledge sharing and collaboration.