10 Cool Cloud AI And ML Services You Need To Know About

CRN takes a look at some cool cloud AI and ML services from the top cloud computing vendors, startups and other providers.

Google Cloud’s machine learning-powered Document AI platform -- which has already been used to process tens of billions of pages of documents for government agencies and the lending and insurance industries, among others -- became generally available last week, along with Lending DocAI and Procurement DocAI.

The serverless Document AI platform is a unified console for document processing that allows users to quickly access Google Cloud’s form, table and invoice parsers, tools and offerings -- including Procurement DocAI and Lending DocAI -- with a unified API. It uses artificial intelligence/machine learning (AI/ML) to classify, extract and enrich data from scanned and digital documents at scale, including structured data from unstructured documents, making it easier to understand and analyze.

DocAI solutions feature Google technologies including computer vision, optical character recognition and natural language processing, which create pre-trained models for high-value, high-volume documents, and Google Knowledge Graph to validate and enhance fields in documents.

Research and advisory firm Gartner predicts AI will be the top category that determines IT infrastructure decisions by 2025, driving a tenfold growth in compute requirements. Half of all enterprises will have AI orchestration platforms by 2025 to operationalize AI, according to Gartner, up from less than 10 percent in 2020.

Google Cloud’s industry-specific Lending DocAI is designed to reduce the time -- from weeks to days -- and cost of closing loans for the mortgage industry by automating mortgage document processing. It processes borrowers’ income and asset documents using specialized ML models and now has more specialized parsers for documents including paystubs and bank statements.

Procurement DocAI enables companies to automate procurement data capture at scale, turning unstructured documents such as invoices and receipts into structured data. Google Cloud recently added a utility parser for electric, water and other bills.

The new specialized parsers for Lending and Procurement DocAI can be used with Google Cloud’s AutoML Text & Document Classification and AutoML Document Extraction services.

Google Cloud also is adding Human-in-the-Loop AI, a new DocAI feature that enables human verification and corrections to help customers get higher document processing accuracy before data is used in critical business applications.

Here’s a look at nine other cloud AI services and tools.

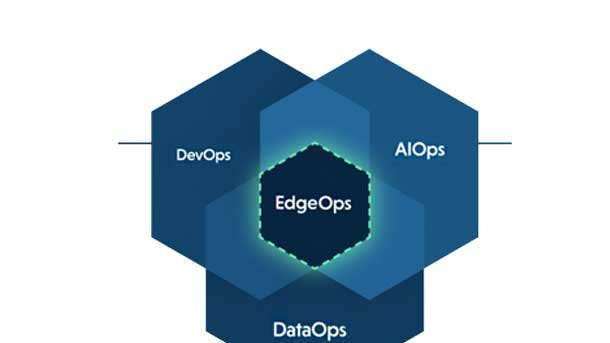

Adapdix EdgeOps Platform

Adapdix EdgeOps is a platform-as-a-service suite for edge-optimized ML/AI deployments. It combines advanced AI/ML analytics with a distributed, edge-based architecture.

The predictive analytics solution, which is based on industrial-grade data mesh technology, enables ultra-low latency and adaptive maintenance to reduce unplanned downtime, while providing automated control for self-correcting and self-optimizing actions on equipment without human intervention, according to the Pleasanton, Calif.-based Adapdix.

The six-year-old startup is initially targeting manufacturing companies in the semiconductor, electronics and automotive sectors as its platform customers.

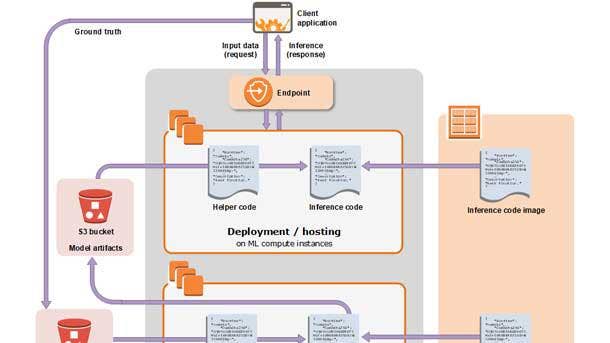

Amazon SageMaker

Amazon SageMaker is Amazon Web Services’ (AWS) flagship, fully managed ML service that data scientists and developers can use to quickly build and train ML models and deploy them into production-ready hosted environments.

Introduced in November 2017, SageMaker is one of the fastest growing services in AWS history, with tens of thousands of active, external customers using it each month, according to the company.

SageMaker includes purpose-built tools for each step of the ML development lifecycle, including labeling, data preparation, feature engineering, statistical bias detection, auto ML, training, running, hosting, explainability, monitoring and workflows. It provides an integrated Jupyter authoring notebook instance for easy access to data sources for exploration and analysis, common ML algorithms optimized to run efficiently against extremely large data in distributed environments, and native support for bring-your-own algorithms and frameworks for flexible distributed training options. SageMaker supports TensorFlow, PyTorch, MXNet and Hugging Face.

AWS has launched more than 50 new SageMaker capabilities in the last year. At its re:Invent conference in December, AWS unveiled SageMaker Feature Store, a fully managed repository to store and share ML features, which are the attributes or properties models use during training and inference to make predictions; SageMaker Clarify to help developers detect bias in ML models and understand model prediction; and SageMaker Pipelines, described as the first purpose-built CI/CD service for ML. It also introduced Amazon SageMaker Data Wrangler, a SageMaker Studio feature that provides an end-to-end solution to import, prepare, transform, feature engineer and analyze data; and SageMaker Edge Manager, which provides model management for edge devices, allowing users to optimize, secure, monitor and maintain ML models on fleets of edge devices such as smart cameras, robots, personal computers and mobile devices.

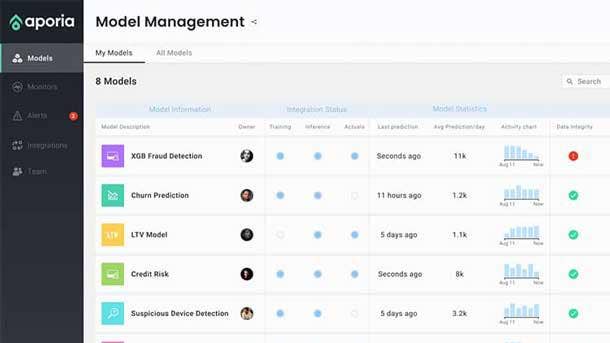

Aporia Monitoring Platform For ML

Tel Aviv, Israel-based Aporia emerged from stealth mode this month with its customizable monitoring platform for ML models that supports private and public clouds.

Aporia’s platform allows data scientists to quickly and easily create their own advanced production monitors to track their ML models’ performance, ensure data integrity and provide responsible AI, according to the startup, which was founded in 2019. It can be installed with a few lines of code and monitors asynchronously, handling workloads of billions of daily predictions without impacting latency, the company said.

The Aporia dashboard provides a single pane of glass for checking ML production status and continuously compares actual performance with expected results. Users can choose the behaviors that they want Aporia to proactively monitor -- including type mismatch, data and prediction drift, model staleness and custom metric degradation -- and an alert engine sends out notifications of issues.
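To make two of the monitored behaviors concrete, here is a minimal sketch of a type-mismatch check and a simple data-drift check. It is an illustration only, not Aporia’s API or method: the function names, the mean-shift drift test and the threshold of three standard deviations are all assumptions chosen for brevity.

```python
from statistics import mean, pstdev


def type_mismatches(records, schema):
    """Return (row index, field) pairs whose value is not of the expected type.

    `schema` maps field names to Python types (hypothetical format).
    """
    return [
        (i, field)
        for i, rec in enumerate(records)
        for field, expected in schema.items()
        if not isinstance(rec.get(field), expected)
    ]


def mean_shift_alert(baseline, live, threshold=3.0):
    """Flag drift when the live mean moves more than `threshold`
    baseline standard deviations away from the baseline mean."""
    base_mean = mean(baseline)
    base_std = pstdev(baseline)
    if base_std == 0:
        # A constant baseline: any change in the mean counts as drift.
        return mean(live) != base_mean
    return abs(mean(live) - base_mean) / base_std > threshold


if __name__ == "__main__":
    rows = [{"age": 30}, {"age": "30"}]
    print(type_mismatches(rows, {"age": int}))
    print(mean_shift_alert([10, 11, 9, 10, 10], [20, 21, 19]))
```

A production monitor would typically use a more robust drift statistic and run outside the prediction path, which is the asynchronous behavior the company describes.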

C3 AI Ex Machina

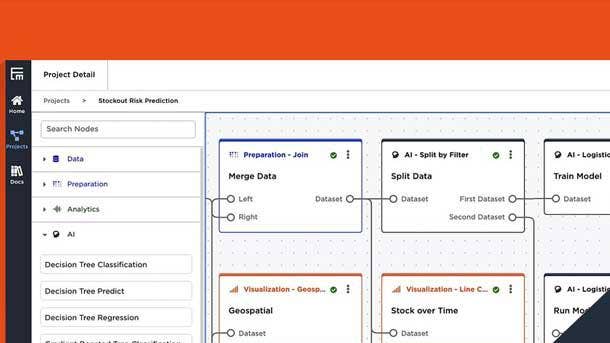

C3 AI Ex Machina is a cloud-native predictive analytics application that allows users to rapidly integrate data and develop, scale and produce AI-based insights without writing code.

The Redwood City, Calif.-based C3 AI, which specializes in enterprise AI software, released the new end-to-end, no-code AI solution into general availability in January.

C3 AI Ex Machina allows users to rapidly and flexibly access and prepare petabytes of disparate data with prebuilt connectors to data sources such as Snowflake, Amazon S3, Azure Data Lake Storage and Databricks; build and manage AI models using an intuitive drag-and-drop interface supported by AutoML; and publish predictive insights to enterprise systems or custom business applications.

Customer use cases include customer churn prevention, supplier delay mitigation, asset reliability prediction and fraud detection.

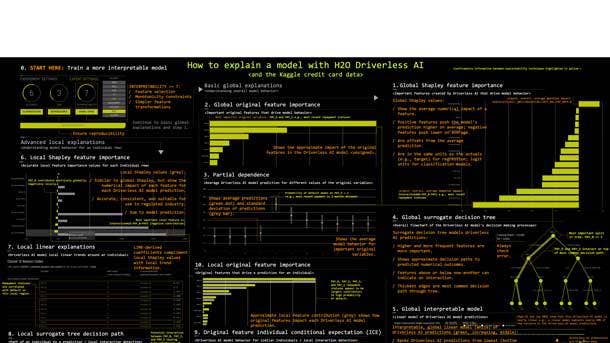

H2O Driverless AI

H2O Driverless AI is an AI enterprise platform for automatic ML that’s designed for the rapid development and deployment of predictive analytics models.

It automates some of the most difficult data science and ML workflows, such as feature engineering -- employing a library of algorithms and feature transformations to automatically engineer new, high-value features for a given dataset -- model validation, model tuning, model selection and model deployment, according to Mountain View, Calif.-based H2O.ai, which specializes in open source AI and ML. The platform also offers automatic visualizations and ML interpretability.

H2O Driverless AI can be customized and extended with more than 130 open-source recipes. It runs on commodity hardware and also was specifically designed to take advantage of graphics processing units (GPUs), including multi-GPU workstations and servers such as IBM’s Power9-GPU AC922 server and the NVIDIA DGX-1 for faster training.

IBM’s Watson Assistant

With IBM’s Watson Assistant, users can build their own branded live chatbot or virtual “assistant” and integrate it into devices, applications or channels -- including webchats, voice calls and messaging platforms -- to provide customers with fast, consistent and accurate answers.

Watson Assistant uses AI to understand questions that customers ask in natural language. It learns from customer conversations and from ML models custom-built on the company’s data, improving its ability to resolve customer issues in real time on the first pass. Companies can use ML to discover common topics in their customer chatlogs, for example, to train the assistant.

Watson Assistant can be deployed on the IBM, AWS, Google and Microsoft Azure clouds or on-premises with IBM Cloud Pak for Data.

Microsoft’s Azure Cognitive Search

Azure Cognitive Search is Microsoft’s AI-powered cloud search service for mobile and web app development.

The fully managed, platform-as-a-service offering has built-in vision, language and speech cognitive skills, and allows developers to integrate their own custom ML models to get insights from content. Its AI capabilities include optical character recognition, key phrase extraction and named entity recognition.

Azure Cognitive Search’s new semantic search capability, integrated into the query infrastructure and available as of March, uses deep learning models to understand user intent and contextually rank relevant search results based on that intent rather than just keywords.

Built-in indexers are available for Azure Cosmos DB, Azure SQL Database, Azure Blob Storage and Microsoft SQL Server hosted in an Azure virtual machine. Azure Cognitive Search works with file formats including Microsoft Word, PowerPoint and Excel, Adobe PDF, PNG, RTF, JSON, HTML and XML.

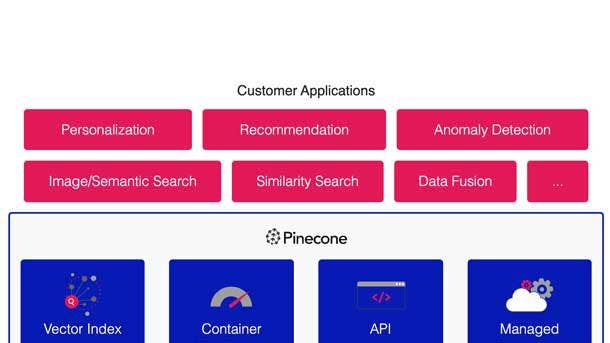

Pinecone Vector Database

Pinecone Systems, a Sunnyvale, Calif.-based ML cloud infrastructure startup, exited stealth mode in January, launching a serverless vector database for ML.

The cloud-native database makes large-scale, real-time inference as easy as querying a database, according to the company, which was founded by CEO Edo Liberty, the former director of research at AWS and head of Amazon AI Labs who also managed the AWS group building algorithms used by Amazon SageMaker customers.

The Pinecone database answers complex queries over billions of high-dimensional vectors in milliseconds, according to the company. It supports large-scale production deployments of real-time applications such as personalization, semantic text search, image retrieval, data fusion, deduplication, recommendation and anomaly detection.
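For readers unfamiliar with vector queries, the sketch below shows the basic idea behind them: rank stored vectors by their similarity to a query vector and return the closest matches. It is a brute-force illustration in plain Python, not Pinecone’s API or indexing algorithm; the function names and the tiny in-memory `index` dictionary are hypothetical, and a database serving billions of vectors would rely on approximate indexes rather than scanning every entry.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query, index, k=2):
    """Return the ids of the k stored vectors most similar to `query`.

    `index` maps an item id to its vector (hypothetical in-memory store).
    """
    scored = [(cosine(query, vec), item_id) for item_id, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [item_id for _, item_id in scored[:k]]


if __name__ == "__main__":
    index = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.9, 0.1]}
    print(top_k([1.0, 0.0], index, k=2))
```

The same ranking step underlies use cases such as semantic text search and recommendation, where the vectors are embeddings produced by an ML model.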

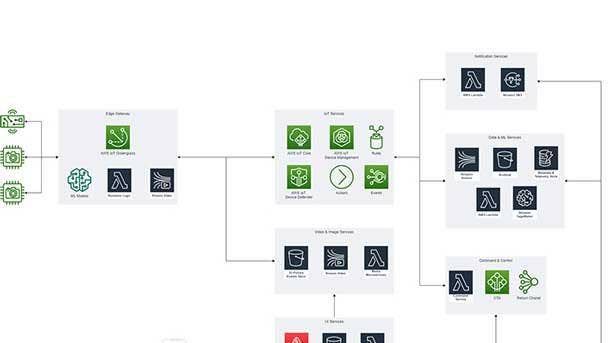

TensorIoT’s SafetyVisor

Irvine, Calif.-based TensorIoT’s SafetyVisor is an easy-to-use, ML-powered IoT solution for automatically monitoring compliance with social distancing policies.

SafetyVisor uses computer vision to monitor the space between people, compensating for scene geometry to estimate distance, and sends real-time alerts, including images, when social distancing violations occur. It can detect and send alerts when workers and guests are not wearing face masks. It also has a space utilization function that tracks people’s movement over time and creates heatmaps, allowing for data-driven cleaning and disinfecting in high-traffic areas.
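The alerting step can be pictured with a short sketch. It assumes the computer vision stage has already mapped each detected person to floor coordinates in meters, which is the part that compensating for scene geometry would handle; the function name, the input format and the 2-meter threshold are hypothetical and are not taken from TensorIoT’s product.

```python
import math
from itertools import combinations


def distancing_violations(positions, min_distance_m=2.0):
    """Return pairs of person ids standing closer than `min_distance_m`.

    `positions` maps a person id to (x, y) floor coordinates in meters
    (assumed to come from an upstream detection and projection step).
    """
    return [
        (a, b)
        for (a, pa), (b, pb) in combinations(positions.items(), 2)
        if math.dist(pa, pb) < min_distance_m
    ]


if __name__ == "__main__":
    people = {"p1": (0.0, 0.0), "p2": (1.0, 0.0), "p3": (5.0, 5.0)}
    print(distancing_violations(people))
```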