7 Things to Know About AWS ML: Swami Sivasubramanian

‘Advancements in machine learning, fueled by scientific research, abundance of compute resources and access to data, has … meant that machine learning is going mainstream,’ says Sivasubramanian, vice president of artificial intelligence and machine learning at Amazon Web Services. ‘We see this in our customers’ adoption of the machine learning technology.’

Machine learning is one of the most transformative technologies of this generation, but the tech community is only “scratching the surface” of what’s possible, according to Swami Sivasubramanian, vice president of artificial intelligence and machine learning at Amazon Web Services.

Machine learning is transforming everything from the way business is conducted to the way people entertain themselves to the way they get things done in their personal lives.

“Entire business processes are being made easier with machine learning,” Sivasubramanian said during AWS’ Machine Learning Summit last week. “Marketers can more easily tailor their message. Supply chain analysts can have faster and more accurate forecasts. And manufacturers can easily spot defects in products.”

And the barriers to entry have been significantly lowered, enabling builders to quickly apply ML to their most pressing challenges and biggest opportunities.

“ML is improving customer experience, creating more efficiencies in operations and spurring new innovations and discoveries like helping researchers discover new vaccines and enhancing agricultural output with better crop monitoring,” Sivasubramanian said. “But we are just scratching the surface about what is possible, and there is so much invention yet to be done. Accelerating adoption of ML requires bright minds to come together and share learnings, advances and best practices.”

Research firm Gartner named AWS a "visionary" in its 2021 Magic Quadrant for Data Science and Machine Learning Platforms in March, noting Amazon SageMaker, AWS’ flagship fully managed ML service, is continuing to “demonstrate formidable market traction, with a powerful ecosystem and considerable resources behind it.”

“We built Amazon SageMaker from the ground [up] to provide every developer and data scientist with the ability to build, train and deploy ML models quickly and at a lower cost by providing the tools required for every step of the ML development life cycle in one integrated, fully managed service,” Sivasubramanian said. “For expert machine learning practitioners, researchers and data scientists, we focus on giving a choice and flexibility with optimized versions of the most popular deep learning frameworks, including PyTorch, MXNet and TensorFlow, which set records throughout the year for the fastest training times and lowest inference latency. And AWS provides the broadest and deepest portfolio of compute, networking and storage infrastructure services, with the choice of processors and accelerators, to meet our customers’ unique performance and budget needs for machine learning.”

Read on to find out what more Sivasubramanian had to say about AWS’ and parent company Amazon.com’s work in ML and how AWS customers are leveraging it.

More than 100,000 Customers Use AWS For ML

Scientific work in ML is exploding, and the publication of scientific papers has grown exponentially over the past years, with an estimated 100-plus published per day, according to Sivasubramanian.

“Advancements in machine learning, fueled by scientific research, abundance of compute resources and access to data, has also meant that machine learning is going mainstream,” Sivasubramanian said. “We see this in our customers’ adoption of the machine learning technology. More than 100,000 customers use AWS for machine learning, and machine learning is enabling companies to reinvent entire tranches of their business.”

Those customers include Basel, Switzerland-based Roche, the world’s second largest pharmaceutical company, which uses Amazon SageMaker to accelerate the delivery of treatments and tailor medical experiences.

To help its customers “cure choice paralysis” by offering tailored content suggestions, the Discovery+ streaming service uses Amazon Personalize, an ML service, based on the same technology used at Amazon.com, that makes it easy for developers to add individualized, real-time recommendations for customers using their applications. The New York Times uses the real-time analysis features of Contact Lens, an Amazon Connect feature that lets users analyze conversations between customers and agents through speech transcription, natural language processing and intelligent search, to respond to customer issues in the moment. And automaker BMW Group is using Amazon SageMaker to process, analyze and enrich petabytes of data to forecast demand for vehicle model makes and individual equipment on a worldwide scale.

“We can see right before our eyes that machine learning science is translating to real customer adoption, and that’s not by accident,” Sivasubramanian said, noting Amazon’s customer-obsession leadership principle of working backward from customers’ problems to develop technology to address them.

“Our teams of researchers are usually embedded in the business, so science goes directly to the customer,” he said. “Researchers that come to AWS and Amazon want to help create powerful innovations that impact millions of people and make machine learning accessible to every organization.”

An ML Prerequisite And A Hurdle

Training data is a prerequisite to all ML, and there’s a need to learn with less of it, according to Sivasubramanian. While humans are incredibly good at learning from a few data samples, ML still requires a lot of data.

“A few years ago, when my daughter was 2, she could easily learn the difference between an apple and an orange with just a few examples,” Sivasubramanian said. “On the other hand, a machine learning model might have needed hundreds of labeled pieces of data to reliably identify between an apple and an orange.”

Data labeling is time-consuming and labor-intensive, and as more companies want to use ML, gathering vast troves of data and annotating them is too tedious and expensive to scale, he said.

The National Football League, for example, wanted to use computer vision to more easily and quickly search through thousands of media assets, but the manpower to tag all the assets at scale was time- and cost-prohibitive, according to Sivasubramanian. Computer vision allows machines to identify people, places and things in images with accuracy at or above human levels and at greater speed.

Swedish company Dafgards, a family-owned frozen foods business, wanted to use more intelligent quality control in its pizza making. The company partnered with AWS to build an automated ML system to do visual quality inspection, because its 12-member IT team had limited ML expertise.

“To solve this problem for our customers, our team of scientists are investing in a technique called few-shot learning, and they wanted to bring few-shot learning to our services,” Sivasubramanian said.

Few-Shot Learning

Few-shot learning tries to replicate the human ability to learn a specific task from just a few examples by incorporating previous knowledge, according to Sivasubramanian.

“For instance, if you know how to add, you will learn how to multiply faster,” he said. “It’s a different operation, but the same underlying framework. Now we have taken this cutting-edge technique, made optimizations and incorporated it into our services, so that our customers can create models custom to their own use cases with very little data.”
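The idea of reusing prior knowledge can be illustrated with a minimal sketch. It is not AWS’ method: it assumes a pretrained model has already turned each image into a short feature vector (the two-number vectors below are invented for illustration) and then classifies a new example by the nearest class average, a simple prototype-based approach that needs only a few labeled examples per class.

```python
import math

def centroid(vectors):
    """Average a list of equal-length feature vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def fit_prototypes(support_set):
    """Build one prototype per label from a handful of labeled examples."""
    return {label: centroid(examples) for label, examples in support_set.items()}

def classify(prototypes, query):
    """Return the label whose prototype is closest to the query vector."""
    return min(prototypes, key=lambda label: math.dist(prototypes[label], query))

# Hypothetical 2-D features that a pretrained model is assumed to have
# produced for three apples and three oranges; the numbers are made up.
support_set = {
    "apple": [[0.9, 0.2], [0.8, 0.3], [0.85, 0.25]],
    "orange": [[0.3, 0.8], [0.2, 0.9], [0.25, 0.7]],
}
prototypes = fit_prototypes(support_set)
print(classify(prototypes, [0.7, 0.3]))  # prints "apple"
```

The heavy lifting sits in the assumed feature extractor, which plays the role of the “previous knowledge” described above; the few labeled examples only place each class within that feature space.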

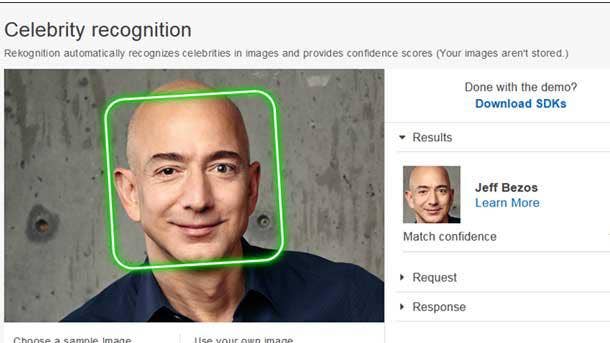

AWS uses few-shot learning in the Custom Labels feature of Amazon Rekognition, its service for image and video analysis, which allows users to identify objects and scenes in images that are specific to their needs. Custom Labels requires at least 10 images per label to train and test a model to analyze images.

“The NFL uses the Custom Labels feature to apply detailed tags for players, teams, objects, action, jerseys, location and more to their entire photo collection in a fraction of time it took them previously,” Sivasubramanian said.

For industrial applications, AWS uses few-shot learning in Amazon Lookout for Vision, its ML service for automated quality inspection that spots visual defects and anomalies in industrial products using computer vision.

“Customers like Dafgards can start to identify quality defects in industrial processes with as few as 30 images to train the model: 10 images of defects or anomalies plus 20 normal images,” Sivasubramanian said.

Building Lookout For Vision

AWS had an interesting challenge when building Amazon Lookout for Vision, which launched in preview in December and became generally available in February.

“Because modern manufacturing systems are so finely tuned, defect rates are often 1 percent or less, and they are typically very slight defects,” Sivasubramanian said. “As a result, the data we needed to train the algorithms that power Lookout for Vision should, as much as possible, reflect the reality of having a small percentage of defects. But there are not just obvious defects, but slight or nuanced defects.”

AWS scientists and engineers working on the project realized early on that the sample defects that they were training models on didn’t match the shop floor reality, so they built a mock factory with conveyor belts and cameras to simulate various manufacturing environments.

“The goal was to create data sets that included normal images and objects, and then draw or create synthetic anomalies such as missing components, scratches, discoloration and other effects,” Sivasubramanian said. “Few-shot learning allowed the team to occasionally work with no images of defects at all. That real-life, trial-and-error iterative process eventually led to the development of Lookout for Vision, which today is being used by customers like Dafgards to inspect and verify product quality.”
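As a rough illustration of what drawing a synthetic anomaly onto a normal image can look like, the sketch below paints a dark diagonal “scratch” onto a small grayscale image stored as nested lists. The function names, image size and pixel values are assumptions made for this example and do not describe AWS’ actual tooling.

```python
import random

def make_normal_image(height, width, level=200):
    """A uniform grayscale image standing in for a photo of a good part."""
    return [[level] * width for _ in range(height)]

def add_synthetic_scratch(image, length, rng, dark=40):
    """Return a scratched copy of the image and a mask marking the defect."""
    height, width = len(image), len(image[0])
    scratched = [row[:] for row in image]        # leave the original untouched
    mask = [[0] * width for _ in range(height)]
    row = rng.randrange(height - length + 1)     # random start that fits
    col = rng.randrange(width - length + 1)
    for step in range(length):                   # paint a diagonal line
        scratched[row + step][col + step] = dark
        mask[row + step][col + step] = 1
    return scratched, mask

rng = random.Random(0)                           # fixed seed for repeatability
normal = make_normal_image(8, 8)
anomalous, mask = add_synthetic_scratch(normal, length=4, rng=rng)
print(sum(map(sum, mask)))  # prints 4, the number of altered pixels
```

Returning the mask alongside the image means each synthetic defect comes with its own label, so no manual annotation is needed for the generated examples.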

Text Extraction And SCATTER

Many AWS customers use ML to extract meaning from documents or images to save the time and costs associated with manual document processing. While traditional text extraction technology is really good at understanding regular text when it is clearly laid out, well-written and horizontal, it’s not as good at understanding irregular text that is blurred or where the characters aren’t aligned in a horizontal manner, according to Sivasubramanian. Results from recent state-of-the-art methods on public academic data sets show only 70 percent to 80 percent accuracy, he said.

AWS set out to solve that problem for customers in a world of faded receipts, blurry images and doctors’ handwritten notes that require analysis.

“When addressing irregular text, models today can implicitly learn language by encoding contextual information in order to infer what the word is, even when only a few letters are properly visible,” Sivasubramanian said. “For instance, if a three-letter word begins with ‘th,’ the model will likely predict that the word is ‘the.’ However, this capability can also lead to errors, when models misinterpret contextual information or when the model struggles to understand text that isn’t an actual word—like 100 (or a) Social Security number—because in those cases, contextual information will simply be redundant.”

ML models must be able to learn when to use visual information and when to use contextual information, said Sivasubramanian, noting “this hasn’t been done well to date.”
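A toy example makes the trade-off concrete. The sketch below is not SCATTER: it simply blends two made-up probability tables for a single blurry character, one from what the image shows and one from language context, using a hand-set `gate` value that stands in for what a model would have to learn.

```python
def blend(visual, context, gate):
    """Mix two character distributions: gate=1 trusts context, gate=0 trusts vision."""
    chars = set(visual) | set(context)
    return {
        ch: gate * context.get(ch, 0.0) + (1 - gate) * visual.get(ch, 0.0)
        for ch in chars
    }

def predict(visual, context, gate):
    """Pick the most probable character after blending."""
    mixed = blend(visual, context, gate)
    return max(mixed, key=mixed.get)

# Third letter of a blurry word that starts with "th" (invented numbers).
visual_word = {"c": 0.55, "e": 0.45}     # the pixels slightly favor "c"
context_word = {"e": 0.9, "y": 0.1}      # language context strongly favors "e"
print(predict(visual_word, context_word, gate=0.5))    # prints "e"

# Last character of a blurry number "10?" (invented numbers).
visual_digit = {"0": 0.6, "o": 0.4}      # the pixels favor the digit
context_digit = {"o": 0.9, "0": 0.1}     # a prior learned from words favors a letter
print(predict(visual_digit, context_digit, gate=0.5))  # prints "o", a misread
print(predict(visual_digit, context_digit, gate=0.1))  # prints "0"
```

The same gate value that rescues the word corrupts the number, which is why the choice between visual and contextual evidence has to depend on the image itself rather than being fixed in advance.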

To address that problem, AWS developed a new method called Selective Context Attentional Scene Text Recognizer, or SCATTER. Sivasubramanian used a word from a doctor’s note to explain how it works.

“With SCATTER, the image passes through an architecture that is composed of a series of stacked blocks, which model the contextual information,” he said. “In each block … we also have a novel decoder, which helps the model learn whether to use contextual information or visual information, depending on the image itself. As it passes through each block, the model improves the encoding of contextual dependencies and thus increasingly refines the predictions, and the final prediction [the word ‘biotech’] is taken from the final block.”

The method surpasses state-of-the-art performance on irregular text-recognition benchmarks by 3.7 percent on average, according to Sivasubramanian.

“[That] doesn’t sound like a lot, but when you think about it, that’s millions of words each day that get the right prediction for our customers,” he said.

SCATTER is available in Amazon Textract, the AWS service that automatically extracts text, handwriting and data from scanned documents, and in Amazon Rekognition.

Deploying ML Services At Scale

One of the great things about working at Amazon is that engineers can deploy new ML services at scale and then improve upon them through customer feedback, according to Sivasubramanian. Many of the services that AWS offers to customers come straight from Amazon.com, which has been investing in ML for 20-plus years and delivering it to millions of consumers.

Amazon Lex, AWS’ natural language chatbot technology, is powered by the same deep learning technology that goes into Alexa, Amazon’s cloud-based AI voice service for devices. Amazon Personalize, AWS’ service for real-time personalized recommendations, is based on the Amazon recommendation system launched in Amazon’s early days and refined over decades.

“Another example where we were able to iterate and improve on a product by implementing it at scale within Amazon is Amazon Monitron, a new end-to-end system that uses machine learning to detect abnormal behavior in industrial machinery,” Sivasubramanian said. AWS announced Amazon Monitron in December.

“Each of Amazon.com’s fulfillment centers have miles of conveyor belts weaving throughout the facility, and they deploy sophisticated equipment to assist employees to pick, pack and ship thousands of customer orders every day,” Sivasubramanian said. “If the equipment fails or requires unplanned maintenance, it can have a huge impact on our operations, so it is the perfect environment for us to pressure test Amazon Monitron.”

AWS used an Amazon.com fulfillment center in Germany as a testbed, installing 800 sensors to catch instances of abnormal vibrations on multiple conveyor belts and alert technicians of potential issues.
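To give a sense of what flagging abnormal vibration can mean in practice, here is a minimal sketch that is not Monitron’s algorithm. It assumes a sensor reports one vibration amplitude per interval (the readings below are invented) and flags any reading more than three standard deviations away from a baseline recorded while the equipment was healthy.

```python
from statistics import mean, stdev

def find_abnormal(readings, baseline_size=10, threshold=3.0):
    """Return indices of readings that deviate strongly from the baseline."""
    baseline = readings[:baseline_size]          # assumed healthy period
    mu, sigma = mean(baseline), stdev(baseline)
    return [
        index
        for index, value in enumerate(readings[baseline_size:], start=baseline_size)
        if abs(value - mu) > threshold * sigma
    ]

# Hypothetical vibration amplitudes: ten baseline readings, then live data.
readings = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 1.1, 0.9, 1.0,
            1.05, 0.98, 2.4, 1.02]
print(find_abnormal(readings))  # prints [12]
```

A fixed threshold like this is also an easy way to see where false alerts come from: set it too low and ordinary fluctuations trigger alarms, too high and early signs of failure are missed.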

“Through this process, we learned a lot and iterated together on a variety of capabilities, including how to reduce false alerts, improving the sensor commissioning user experience and developed a better understanding of the optimal range of a sensor to a gateway,” Sivasubramanian said.

In the next 12 months, Amazon will be installing tens of thousands of Monitron sensors and thousands of Monitron gateways across dozens of Amazon.com fulfillment centers worldwide to help reduce unplanned equipment downtime and improve the customer experience.

Industry-Specific AI Services

For developers and increasingly business users, AWS is building AI services to address common horizontal and industry-specific use cases to easily add intelligence to any application without needing ML skills. They include Amazon Textract, Amazon Rekognition, Amazon Lookout for Vision and Amazon Monitron.

“We embed AutoML in these AI services so that customers don’t need to worry about data preparation, feature engineering, algorithm selection, training and tuning, inference and model monitoring,” Sivasubramanian said. “Instead, they can remain focused on their business outcomes. These services help customers do things like personalize the customer experience, identify and triage anomalies in business metrics, image recognition, automatically extract meaning from documents and more.”

AWS also has built a suite of solutions for the industrial sector that use visual data to improve processes and services that use data from machines for predictive maintenance. In health care, it has purpose-built solutions for transcription, medical text comprehension and Amazon HealthLake, a new HIPAA-eligible service to store, transform, query and analyze petabytes of health data in the cloud.