How Intel Helps Partners Use AI, Vision Solutions With Regular CPUs

'It's a massive deal because the install base is so big,' says Intel IoT ecosystem general manager Steen Graham in an interview about how channel partners can deploy computer vision solutions at the edge with regular CPUs using Intel's OpenVINO toolkit.

Using OpenVINO To Deploy AI At The Edge

For Intel channel partners looking to deploy computer vision and artificial intelligence solutions in edge devices, the semiconductor giant wants to make something clear: partners can build such applications on top of existing infrastructure with regular CPUs.

This is made possible by OpenVINO, a developer toolkit released by Intel a year ago that gives developers easy ways to optimize and deploy trained neural networks — systems designed to function like the human brain — on Intel's processor technologies for visual inference applications and beyond.

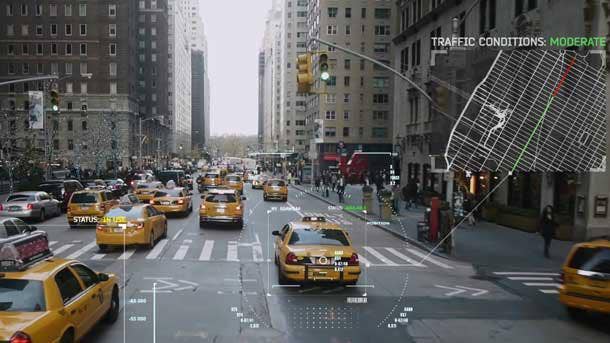

Since its initial release, OpenVINO — which stands for Open Visual Inference and Neural Network Optimization — has been used by companies for a range of use cases. Philips Medical, for instance, is using OpenVINO to significantly improve the performance of its bone-age-prediction models. GridSmart, on the other hand, has been able to reduce wait times at traffic intersections with the toolkit.

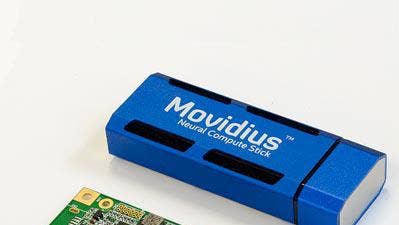

A key capability for Intel's broader channel, however, is the ability to optimize neural networks on the company's general-purpose processors. That means GPUs or Intel's special-purpose processors, like Movidius vision processing units and FPGAs, aren't required, depending on the workload.

"It's a massive deal because the install base is so big," said Steen Graham, general manager of Intel's Internet of Things ecosystem and channels, in an interview with CRN. "Getting those workloads to run on one host system is going to reduce your total cost of ownership and increase your time to deployment of convolutional neural networks."

In one example, video surveillance provider AxxonSoft developed new AI deep learning analytics capabilities for new security solutions at several World Cup stadiums. Thanks to OpenVINO, these deep learning workloads were optimized for an 8X performance boost on existing Intel Core processors and an 8.3X performance boost on existing Intel Xeon processors.

"They had physical constraints on the infrastructure that didn't have additional capacity or space," Graham said, "but they wanted to add deep learning capability to their existing surveillance technologies to the reduce the number of false alerts and [add] capabilities like license plate detection."

What follows is an edited transcript based on an interview with Graham that dives into OpenVINO's capabilities, compelling use cases, how channel partners can take advantage of the toolkit and why other kinds of Intel products, like FPGAs, are better suited for certain kinds of workloads.

OpenVINO. Can you explain what it is? And who it’s designed for?

OpenVINO is a software development kit that we launched last year that allows you to take a trained model (a trained neural network) and optimize it and deploy it on different AI accelerator technologies, such as CPUs, integrated graphics, FPGAs and VPUs. And you can do that writing in C++ or Python, and that code will just scale seamlessly from a sub-three-watt AI accelerator VPU [vision processing unit] technology, like our Neural Compute Stick 2, all the way up to our Xeon product line. So [it covers] a range of power-performance form factor envelopes, and it's targeted for enterprise developers that are looking to deploy neural networks in production environments.
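To make the "same code, different hardware" point concrete, here is a minimal Python sketch of choosing a target device at run time. It is an illustration under stated assumptions, not Intel's code: the helper `pick_device` and the preference order are hypothetical, and the device strings ("CPU", "GPU", "MYRIAD") are assumed to follow the plugin names OpenVINO used for CPUs, integrated graphics and the Neural Compute Stick.

```python
# Hypothetical helper: choose where to run a network from the devices a
# machine reports. The preference order is an assumption for illustration.
DEFAULT_PREFERENCE = ("MYRIAD", "GPU", "CPU")


def pick_device(available, preference=DEFAULT_PREFERENCE):
    """Return the first preferred device that is present, else "CPU"."""
    # A runtime may report indexed names such as "GPU.0", so compare on
    # the part before the dot.
    present = {name.split(".")[0] for name in available}
    for device in preference:
        if device in present:
            return device
    # The host CPU is the fallback the article emphasizes.
    return "CPU"


if __name__ == "__main__":
    print(pick_device(["CPU"]))            # host CPU only
    print(pick_device(["CPU", "GPU.0"]))   # integrated graphics present
    print(pick_device(["CPU", "MYRIAD"]))  # compute stick plugged in
```

In a real application the returned string would typically be handed to the toolkit as the device name when the model is loaded, so the rest of the program would not need to change between a compute stick and a Xeon server.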

How does this play into more traditional channel partners? What good could this do for them?

One of the trends in the market is this trend towards edge computing, and the reason customers are moving to edge computing is really driven by some fundamental tenets of the laws of physics and latency requirements, the economics of edge computing, the fundamental challenge around security and privacy and being compliant with the regulatory environment there, and then enabling capabilities like persistent connectivity and having that type of fault tolerance.

And so what's happening is as companies move towards edge computing, they're finding that one of the applications they can deploy is artificial intelligence. And video is the largest data type: if you look out a few years, over 80 percent of IP traffic will be video data. And if you put the analytics close to the source of the data, you have a great opportunity to extract value and insights. In doing so, what the OpenVINO toolkit allows you to do is deploy neural networks at the edge.

And so for our traditional channel customers, that allows them to work with ISV partners and developers on OpenVINO and our ecosystem around that and deploy new technologies to their end users. And what's really exciting about OpenVINO is that it allows you to deploy neural networks on existing Intel systems. So maybe you did a deployment last year, and you come back to that customer and say, "Hey, I know we did a security deployment of NVR [network video recorder] and cameras. What do you think about adding additional features like license plate detection, motion detection, facial recognition?" And with the OpenVINO toolkit, you can go to those existing deployments and offer new capabilities with optimized AI inference performance.

And one of the really fun things about the OpenVINO toolkit is we offer pre-trained models as well. So if you really want to demonstrate capability quickly, you're able to leverage our pre-trained models. And we have pre-trained models that range from facial detection to smart classroom to traffic analytics and much more within the toolkit. So it's a great capability for the traditional channel partners that have done deployments and are working with customers and are saying, "How can I add artificial intelligence to solve some additional problems for my customers?"

What are some of the most compelling real-world uses you've seen for OpenVINO so far?

One of the things I've mentioned several times is medical imaging, and it just so happens that the data on medical images is growing at a much faster rate than the number of radiologists employed. And how do we get critical information to the doctor and the patient as soon as possible? Adding something like artificial intelligence to medical imaging and then pairing that with medical professionals to assess the diagnosis enables them to do their job much faster. And that's a really compelling use case.

What's really inspiring is if you look at the Neural Compute Stick, people are tremendously excited about solving major medical challenges. So we've had developers use OpenVINO to develop skin cancer detection. We've had developers enable OpenVINO to do something like acute myeloid leukemia detection and prototype that capability out. So from the leading medical imaging companies in the world to innovative developers, we've seen tremendous work on that medical imaging use case.

I think that the other area that I'm really passionate about — nobody likes waiting at intersections and optimal traffic flow is something I think we're all passionate about, and we've seen a number of deployments of OpenVINO to enable smarter traffic flow. So for example, we've worked with GridSmart to enable intersection actuation with AI in cities like Bangkok that have really difficult traffic challenges, and we've seen a reduction in average [queues or wait times] by over 30 percent.

So using computer vision in medical imaging, using it across transportation and smart cities use cases are a couple of great examples. We've also enabled it working with a partner across telecom and in use cases that enable trains to do things like crossroad detection, pedestrian detection, vehicle identification on crossroads — and then enable people to find seats easier in the train [with] an on-train empty seat detection solution, so if you've got a really busy train, and you want to find a place to sit, computer vision can help you with that as well.

Have there been instances in the real world where someone can take a company's existing IT infrastructure then use OpenVINO to add computer vision capabilities?

One of the areas that the ecosystem and many end users are excited about is they get so much performance improvement just in software [with OpenVINO]. For example, we were working with Philips Medical on a bone-age-prediction model, and we actually were able to improve the performance of that bone-age-prediction model 188 [times] with the OpenVINO toolkit, all in software. Now, bone age prediction is done on X-rays. If you look at the lung segmentation models that we worked on for CT images with Philips, we got over 30X performance improvement there.

You'll find that a lot of companies get outstanding performance improvement in software. In regards to deployments of use cases on existing technologies, one additional example is if you look at the World Cup, in 10 out of 12 stadiums [where World Cup matches were held], AxxonSoft deployed OpenVINO on existing infrastructure. They had physical constraints on the infrastructure that didn't have additional capacity or space, but they wanted to add deep learning capability to their existing surveillance technologies to reduce the number of false alerts and [add] capabilities like license plate detection. And we were able to do that by adding OpenVINO on existing infrastructure. So certainly we've seen that response and tremendous feedback from the market in implementing on existing infrastructure.

How big of a deal is it that OpenVINO allows you to use convolutional neural networks with general-purpose CPUs and integrated graphics?

It's a massive deal because the install base is so big, and then the economics of running on the host processor you're going to deploy for your application node and then pairing a new capability with that. So getting those workloads to run on one host system is going to reduce your total cost of ownership and increase your time to deployment of convolutional neural networks. One thing we talked about last year is how OpenVINO is really targeted for computer vision and convolutional neural networks that enable AI inference optimizations. However, we've added new capabilities over the last year like LSTM [long short-term memory] models. And with LSTM optimizations, you can do things like text and speech recognition, so we continue to expand that portfolio of capabilities with OpenVINO, and that's another thing that the ecosystem has been really excited about.

We just released our first release of this year, and we added new LSTM models. We have over a hundred networks optimized on OpenVINO. We added new operating system support. So, for example, supporting macOS on our CPUs with OpenVINO (obviously, there's a lot of developers that use Macs for development). So we just continue to enable new capabilities with that toolkit and expand the coverage in optimizations for AI and complementary technologies. Of course, we're working with all the major frameworks as well: TensorFlow, PyTorch, MXNet, Caffe and more.

OpenVINO just keeps getting better with every release. I'll just pile on to my excitement: we launched our latest Xeon processor line [at the beginning of April], and that is supported by OpenVINO. We had another leading company in the medical imaging sector, Siemens, showcase our latest Xeon processor optimized with OpenVINO for medical imaging use cases. Now we're showcasing performance on Philips, Siemens and GE Healthcare applications.

What do you get out of OpenVINO using a CPU with integrated graphics versus OpenVINO using a Movidius VPU or FPGA?

So what we find is, optimizing the host system CPU and integrated graphics performance is optimal for a lot of use cases, and you can get a lot of performance improvement with the OpenVINO toolkit in software without applying new hardware. However, if you have models that are optimized for convolutional neural networks, for example, some of them might run better from a performance-per-watt envelope on our VPU technology. And so you might want to use one of our vision accelerator cards, and the incredible thing here is you can take a Neural Compute Stick, you can buy it online, you can start prototyping on the Neural Compute Stick, and you can scale all the way up to eight VPUs. So moving from 10 frames per second on your model to hundreds of frames per second on your model, we can give you that scalability, and there's going to be certain neural networks that are optimized to perform really well on VPU technology. That's going to be really compelling, certainly if you look at the performance-power envelope, and we offer that in many different form factors as well. So M.2 form factors, many PCIe form factors, half PCIe form factors to complement that USB form factor you can start developing on today.

And maybe you want the ultimate flexibility, and you really like the capabilities of an FPGA. And so what you'll find is that FPGAs are fantastic if you've got a really big model footprint and you want that ultimate flexibility. And so one of the incredible things about the OpenVINO toolkit is we're really democratizing the development of AI and FPGAs by making it really easy and using standard programming languages such as C++ and Python. You don't need to be a deep-trained FPGA developer. You get the benefits of an FPGA, get your big memory model in that FPGA and start running it and get great flexibility from that as well. So, really what we find is that the neural networks range, the use cases range, and having a scalable hardware portfolio across performance and power envelopes and feature capabilities is something that developers are demanding.

Does the Omnitek acquisition have any implications for what Intel can offer with OpenVINO?

The acquisition enables us with new capabilities and skill sets around media. I think one of the things we haven't really talked about is that OpenVINO is enabling inference optimization, but it pairs really well with optimized media transcoding. So one of the capabilities within the OpenVINO toolkit is we have our media SDK, and that's enabling media transcoding on our integrated graphics. And so if you're doing something like transcoding an H.264 [format], and you want to optimize performance there, and then you want to go run AI inference on that image, you can do that seamlessly by pairing those technologies.
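The decode-then-infer pairing Graham describes can be pictured as a two-stage pipeline. The Python sketch below is only an illustration of that shape: `frames` stands in for the output of a hardware video decoder and `infer` for a compiled neural network, and both are hypothetical placeholders rather than Media SDK or OpenVINO calls. Analyzing only every nth frame is an added assumption, a common way to keep inference cost below the decode rate.

```python
from typing import Callable, Iterable, Iterator, Tuple, TypeVar

Frame = TypeVar("Frame")
Result = TypeVar("Result")


def analyze_stream(
    frames: Iterable[Frame],
    infer: Callable[[Frame], Result],
    every_nth: int = 1,
) -> Iterator[Tuple[int, Result]]:
    """Yield (frame_index, result) for every nth decoded frame."""
    if every_nth < 1:
        raise ValueError("every_nth must be at least 1")
    for index, frame in enumerate(frames):
        # Skip frames between samples; decoding still happens upstream.
        if index % every_nth == 0:
            yield index, infer(frame)


if __name__ == "__main__":
    # Stand-ins: integers play the role of decoded frames, and the
    # "network" just labels each frame as even or odd.
    fake_frames = range(10)
    fake_infer = lambda frame: "even" if frame % 2 == 0 else "odd"
    for index, label in analyze_stream(fake_frames, fake_infer, every_nth=5):
        print(index, label)
```

Because the function is a generator, frames are pulled from the decoder one at a time, so the two stages can run in step without buffering a whole video in memory.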

And so I think what you'll find more from Intel is you'll continue to see those enhancements of media optimization with AI inference optimization, especially as you look out in time, you'll see in a couple of years that 80 percent of IP traffic will be video. We've just got to make it seamless and efficient to transcode that video and then apply deep learning on that video.