AWS re:Invent 2018: 21 New Ways To Connect Storage To AWS

The cloud is key to data protection, disaster recovery and archiving, and these 21 vendors are at AWS re:Invent to show how they are helping partners and customers utilize AWS as a cornerstone of their storage infrastructures.

Storage Front And Center At AWS re:Invent

The AWS re:Invent conference is an important event not only for learning the latest about how to work with Amazon Web Services, but also for seeing how everyone in the AWS ecosystem is helping customers and channel partners take advantage of the public cloud.

And that includes a huge contingent of storage and data management vendors that are showing new ways to use the AWS cloud to manage data protection, archiving and disaster recovery as well as to prepare and manage data for use in a wide range of cloud-based workloads.

CRN highlights 21 storage-focused exhibitors that are either showing new storage hardware or software offerings aimed at the AWS ecosystem for the first time or have introduced them in the past few months.

Here’s how these vendors are helping partners connect storage to AWS.

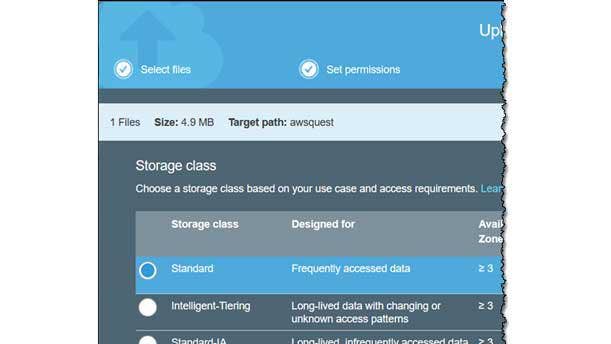

Amazon S3 Intelligent-Tiering

Amazon opened its AWS re:Invent conference with the introduction of the S3 Intelligent-Tiering class of cloud storage, a new automated storage tiering capability for handling files that have not been accessed for 30 days.

Previously, AWS offered four classes of service: Standard for frequently accessed data; Standard-IA for long-lived, infrequently accessed data; One Zone-IA for long-lived, infrequently accessed noncritical data; and Glacier for long-lived, infrequently accessed, archived critical data. S3 Intelligent-Tiering is a new fifth class of service that automates movement of data between two access tiers, called frequent access and infrequent access. Data in the S3 Intelligent-Tiering frequent access tier that is not accessed for 30 days is automatically moved to the infrequent access tier to take advantage of the lower cost, but is moved back to the frequent access tier if it is later accessed.
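The tiering rule described above can be sketched in a few lines of Python. This is an illustrative model only, not AWS's implementation: the `TieredObject` class, its method names and the sample object key are hypothetical, and treating exactly 30 idle days as the cutoff is an assumption drawn from the description above.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Threshold taken from the article: 30 days without access.
INFREQUENT_AFTER = timedelta(days=30)


@dataclass
class TieredObject:
    """Hypothetical model of one object stored in S3 Intelligent-Tiering."""
    key: str
    last_accessed: date
    tier: str = "frequent"

    def access(self, today: date) -> None:
        """Record a read; an accessed object returns to the frequent tier."""
        self.last_accessed = today
        self.tier = "frequent"

    def apply_tiering(self, today: date) -> None:
        """Move the object to the infrequent tier once it has been idle 30 days."""
        if today - self.last_accessed >= INFREQUENT_AFTER:
            self.tier = "infrequent"


obj = TieredObject(key="reports/2018-q3.csv", last_accessed=date(2018, 11, 1))
obj.apply_tiering(date(2018, 11, 20))  # 19 idle days: stays in frequent
tier_after_19_days = obj.tier
obj.apply_tiering(date(2018, 12, 1))   # 30 idle days: moves to infrequent
tier_after_30_days = obj.tier
obj.access(date(2018, 12, 5))          # a read moves it back to frequent
tier_after_access = obj.tier
print(tier_after_19_days, tier_after_30_days, tier_after_access)
```

In the real service the movement happens automatically inside S3. A customer only selects the storage class, which the S3 API names `INTELLIGENT_TIERING`, when writing an object.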

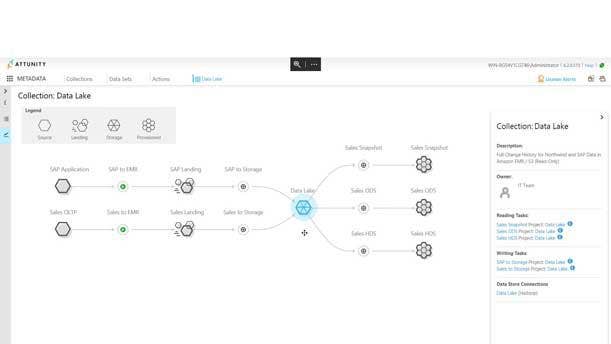

Attunity For Data Lakes

Attunity for Data Lakes helps overcome the challenges enterprises face in creating analytics-ready data sets from heterogeneous data sources by automatically generating data pipelines specific to such data sets. The offering, from Burlington, Mass.-based Attunity, manages data pipelines from the creation of source system data streams through delivery to Amazon S3 and on to the creation of analytics-ready data sets on AWS. Attunity helps simplify and accelerate the creation of data lakes in AWS with an end-to-end platform that brings together change data capture technology, heterogeneous data replication and Spark-based transformations.

Cloud Daddy Secure Backup Version 1.3

Cloud Daddy Secure Backup 1.3 from Princeton, N.J.-based Cloud Daddy builds on its prior cross-region and cross-account backup, restore and disaster recovery orchestration capabilities with the addition of Amazon GuardDuty intelligent threat detection. A new dashboard provides alerts on malicious IP address ranges and maps of the alert locations. Other new additions include enhanced backups and restores of Aurora, Redshift and DynamoDB clusters; granular control of instance and volume restoration options; and a new module with backup statistics on the backup dashboard. In addition, jobs and schedules can be created immediately from the dashboard, with pie charts detailing protected and unprotected instances. To better manage AWS backup costs, it also includes real-time cost estimates of backup jobs as they are being created, together with a consolidated total cost estimate for all existing backup jobs.

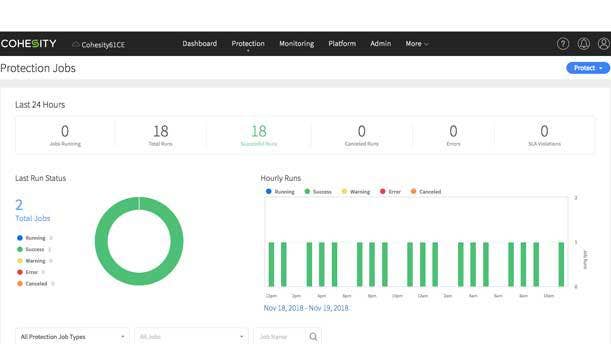

Cohesity Expanded Solutions Powered By AWS

Cohesity’s new solutions, powered by AWS, let customers combine cloud-native backups, full life-cycle disaster recovery and long-term data retention on a single platform. The San Jose, Calif.-based secondary data storage technology developer's new offerings also include increased automation of cloud-based backup and recovery processes, leveraging Amazon Elastic Block Store snapshot APIs to protect cloud-native virtual machines, and Cohesity DataProtect auto-discovery and auto-protect to ensure that backups scale automatically as cloud-based workloads are added or deleted. This integration will help customers leverage AWS for full life-cycle disaster recovery with failover and failback capabilities. It will also provide support for AWS Snowball within the Cohesity platform, offering customers an easy way to handle data migration.

Ctera Networks HC Series Edge Filer

New York-based Ctera's new HC Series filers provide an alternative cloud-enabled appliance that can seamlessly replace legacy NAS infrastructure at remote and branch offices. The new Edge Filers, designed for evolving branch IT environments, offer data center-scale capacity of up to 96 TB at the edge with smart caching technology that tiers large or infrequently accessed files to the cloud for cost-effective storage. The HC Series supports up to 5,000 users, with an all-flash option for enterprises seeking higher performance. The HC Series offers a 10GbE interface and can spin up multiple virtual machines on the same device, a key requirement of large enterprises. The filers offer scalability, performance and flexibility in deploying remote office and branch office file services while helping customers transition to the cloud.

Dataguise DgSecure 6.4

DgSecure from Fremont, Calif.-based Dataguise gives customers a data-centric governance offering to detect, audit, protect and monitor sensitive data in real time across the enterprise and the cloud. DgSecure 6.4 lets channel partners support customers' GDPR and CCPA compliance requirements across AWS storage repositories, including Amazon Redshift, Amazon Relational Database Service and Amazon S3. It includes expanded GDPR platform support and a streamlined flow of Right of Access and Right to Erasure requests across data platforms, unique and total counts of sensitive information detected for use in GDPR breach reporting, a new GDPR view within the DgSecure dashboard, enhanced isolation for multiple groups within multitenant environments, and a new built-in scheduler for GDPR compliance procedures.

Datrium CloudShift

Datrium CloudShift is a SaaS-based disaster recovery orchestration service for VMware that provides simple operation, low recovery point objectives, low AWS cloud cost, and end-to-end readiness assurance. The technology, from San Mateo, Calif.-based Datrium, helps customers develop hybrid cloud disaster recovery infrastructures built on Datrium’s single data stack and a simple, SaaS-based operation. It helps eliminate vendor finger-pointing, and provides end-to-end resource health checks to help ensure successful disaster recoveries. CloudShift supports on-demand hybrid cloud as well as on-premises-only configurations.

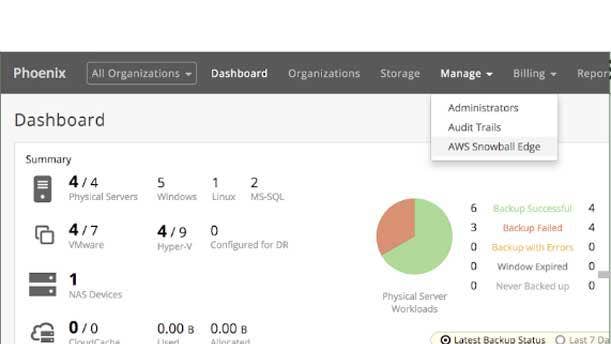

Druva Cloud Platform On AWS Snowball Edge

AWS Snowball Edge is a data migration and edge computing device with 100 TB of capacity and support for computing tasks via built-in Amazon EC2 and AWS Lambda function capabilities. Sunnyvale, Calif.-based Druva takes advantage of AWS Snowball Edge to facilitate the fast transition of data to and from the cloud. Enterprises gain workload mobility along with advanced cloud services like disaster recovery and replication to help improve their overall business continuity SLAs without the need for dedicated appliances or secondary storage devices. Druva and AWS partnered to pre-package Druva’s cloud data management technology on the appliances, which will be delivered directly from AWS to the customer and give businesses an as-a-service offering with simplified provisioning and management at no additional cost.

Hammerspace

Hammerspace is a SaaS company delivering unstructured data across the hybrid cloud. The Los Altos, Calif.-based company's technology provides a workspace for data with no lock-in from storage silos. It offers a storage-agnostic and protocol-agnostic hybrid cloud data control plane that abstracts data from the infrastructure for self-service hybrid cloud data management driven by machine learning and metadata management to deliver data-as-a-service to users. Hammerspace provides data accessibility across clouds rather than treating each cloud as a storage silo by virtualizing the data and managing it via metadata to give control and performance to the data consumer.

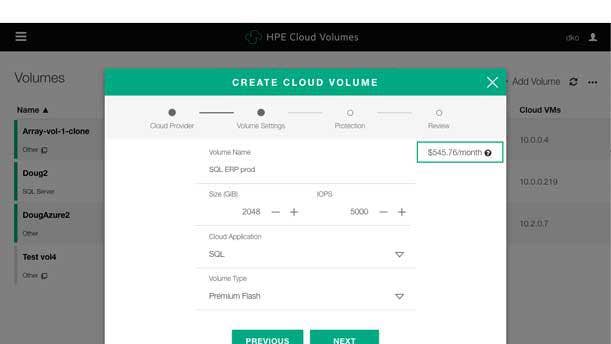

Hewlett Packard Enterprise Cloud Volumes

HPE Cloud Volumes is an enterprise-grade multi-cloud storage service for AWS and Microsoft Azure that gives channel partners the opportunity to resell cloud storage services with recurring revenue or sell prepaid credits with up-front margins. The Palo Alto, Calif.-based company used AWS re:Invent to unveil major enhancements to HPE Cloud Volumes, including an expansion into the U.K. and Ireland in 2019 to serve European customers requiring local cloud data access. HPE Cloud Volumes now supports leading container platforms including Docker and Kubernetes to speed DevOps and testing and development of cloud-native apps and hybrid cloud workloads. It allows containerized apps and data to be easily moved and cloned for continuous integration and development pipelines. HPE also completed SOC 2 Type 1 certification for customers with stringent compliance controls and HIPAA compliance.

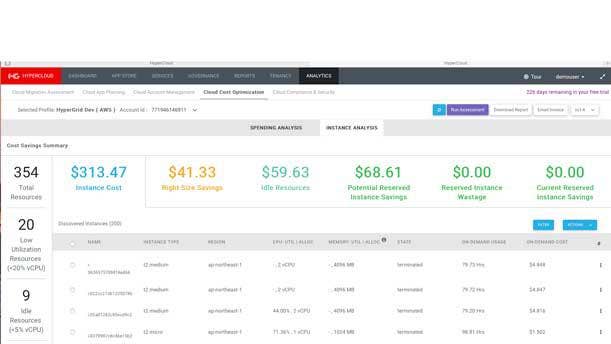

HyperGrid HyperCloud 6.0

The HyperCloud platform from San Jose, Calif.-based HyperGrid takes a proactive approach to cloud planning, cost management, governance, security and compliance by eliminating manual processes that can impede or derail cloud adoption. It offers simple setup and workflows that require just a few clicks to get actionable insight. HyperCloud 6.0 helps enterprises and MSPs navigate their cloud journey with the addition of AWS Lambda and Microsoft Azure CSP Program support; proactive disaster recovery planning with Zerto integration; cost management with Azure Reserved Instances; VMware Cloud on AWS support; and enhanced automation and turnkey compliance analysis and remediation. These updates let HyperCloud 6.0 provide organizations of any size the ability to execute cloud migration projects, continuously optimize cloud consumption, automate security and compliance, and scale cloud operations.

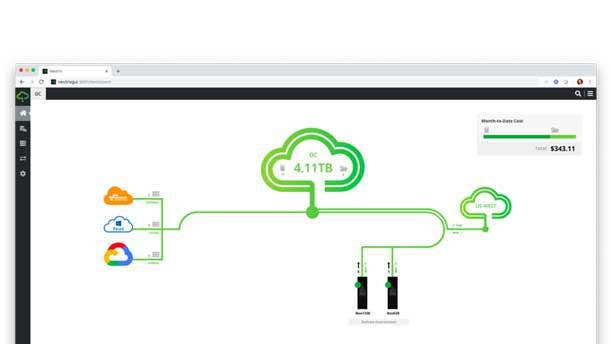

Infinidat Neutrix Cloud 2.0

Neutrix Cloud from Waltham, Mass.-based Infinidat is a public cloud storage service that offers file systems and block volumes that are simultaneously accessible from Google Cloud Platform, Microsoft Azure, AWS, IBM Cloud, and VMware Cloud on AWS compute environments. It can be employed as a stand-alone service or with on-premises InfiniBox appliances in hybrid cloud replication mode with a recovery point objective of as low as 4 seconds. The latest version lets channel partners bring customers a storage solution that includes multiple public cloud connectivity, support for HTML5 GUI, and cross-regional data replication in a single, sovereign public storage cloud designed for multi-petabyte cloud file systems and block volumes.

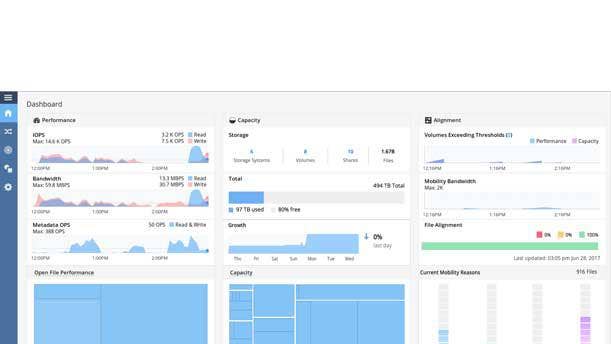

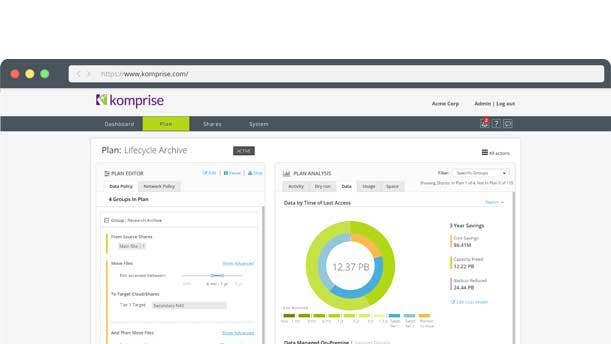

Komprise Intelligent Data Management 2.9

Komprise analyzes data growth across storage, identifies cold data, and transparently archives, migrates and replicates this data to the cloud by policy. Version 2.9 of the Campbell, Calif.-based company's offering extends these capabilities to deliver data access from anywhere as files or objects to enable new uses such as artificial intelligence and big data analytics in the cloud. Komprise moves data with full integrity including all the file data, access controls and permissions so customers can access moved data as files or objects from anywhere. This helps customers modernize their data management strategy without disrupting users or applications. Komprise is an AWS Advanced Tier Partner, and it moves data to AWS S3, S3 IA, EFS and Glacier, and works with Amazon Elastic MapReduce and Transcribe.

Micron 5210 ION Enterprise SATA QLC SSD

Micron this month started to ship its Micron 5210 ION SSD. The Boise, Idaho-based storage developer called it the world’s first SSD to go to market with quad-level cell NAND technology, which increases capacity while keeping costs down. Designed for such workloads as real-time analytics, big data, media streaming, block/object, SQL/NoSQL, and data lakes for artificial intelligence and machine learning, the Micron 5210 SSD brings those workloads both performance and capacity.

N2WS Backup And Recovery 2.4

West Palm Beach, Fla.-based N2WS, a company owned by Veeam that develops N2WS Backup and Recovery software for AWS environments, this month released version 2.4 of the software. New with the version are Amazon EBS snapshot decoupling and the N2WS-enabled Amazon repository, which the company said help customers reduce storage costs by up to 40 percent. The new release lets users choose from different storage tiers to help reduce costs for longer retention times. Version 2.4 also eliminates the need for manual configuration during disaster recovery processes by capturing and cloning Amazon Virtual Private Cloud, and uses an enhanced RESTful API to automate backup and recovery for business-critical data.

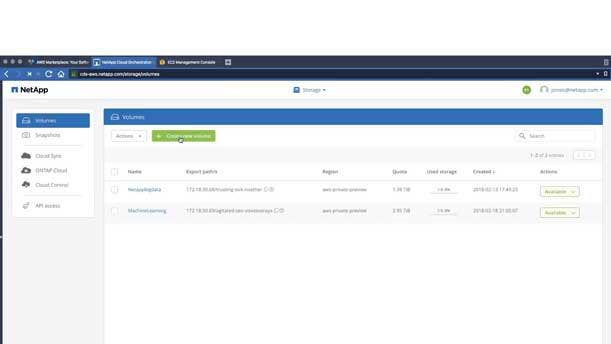

NetApp Cloud Volumes For AWS

NetApp Cloud Volumes for AWS provides proven NFS and SMB data management services for AWS. Sunnyvale, Calif.-based NetApp said the offering enhances DevOps flexibility with REST API support and a preview of its Cloud Backup service, and provides enterprise-class services to cloud applications to let workloads run in the cloud with speed and predictability. NetApp Cloud Volumes for AWS also supports data lakes with multiple data sources and simultaneous multi-application data sharing to help eliminate the need to rearchitect file-based applications for the cloud, as they can be moved to the cloud without development cycles. Channel partners can use NetApp Cloud Volumes for AWS to move to subscription-based revenue, offering an attach opportunity when reselling AWS cloud storage while helping customers move from on-premises to the cloud.

Pure Storage Cloud Data Services

Pure Storage Cloud Data Services is a suite of new products that run natively on AWS. Mountain View, Calif.-based Pure Storage is wrapping Cloud Block Store, CloudSnap and StorReduce in the suite. Pure Storage’s Cloud Block Store is a cloud block storage offering that allows mission-critical applications to run in the cloud, with new capabilities for web-scale applications. CloudSnap is a cloud-based data protection offering built into Pure Storage’s FlashArray flash storage array. StorReduce, which Pure Storage acquired this summer, is an object storage deduplication engine designed to help cloud object storage replace tape for data protection. The new Pure Storage Cloud Data Services are purpose-built for cloud storage and optimized for the AWS environment.

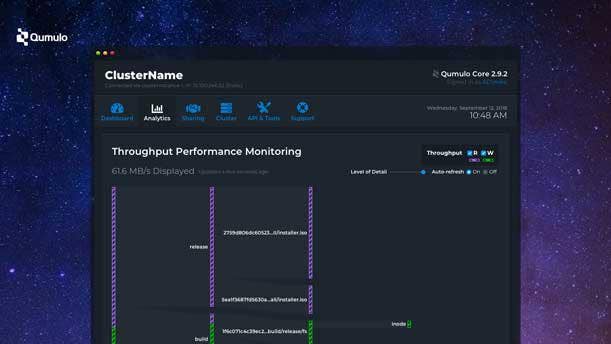

Qumulo Software

Qumulo's new software innovation delivers intelligent performance capabilities aimed at transforming the media and entertainment industry. The Seattle-based storage software developer said its software removes storage network limitations that impact film and video production studios with high-performance, centralized uncompressed 4K and 6K video playback solutions that help reduce the need for costly SANs, client-side software, host-bus adapters, and Fibre Channel switches and cabling. The result is an all-flash storage environment that works in 100 percent Ethernet-based environments, which Qumulo said will increase the number of editors that can be supported while improving collaboration and lowering the cost of video editing, visual effects and video distribution.

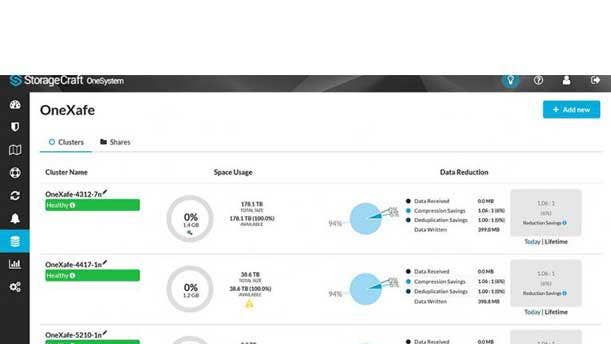

StorageCraft OneXafe

OneXafe from Draper, Utah-based StorageCraft is a converged scale-out object-based storage and data protection platform that handles both primary and secondary storage, protects both physical and virtual environments, and can replicate to StorageCraft Cloud Services and provide cloud-based Disaster Recovery as a Service. The object-based file system delivers universal data access by providing NFS and SMB access to users and applications for primary and secondary storage. StorageCraft said OneXafe delivers industry-leading recovery time objectives and recovery point objectives, with a patented VirtualBoot feature that provides terabyte virtual machine recovery in less than a second, both on-premises and in the cloud. And, unlike stand-alone backup appliances, OneXafe allows additional nodes to be added to gridlike clusters of backup/secondary storage capacity.

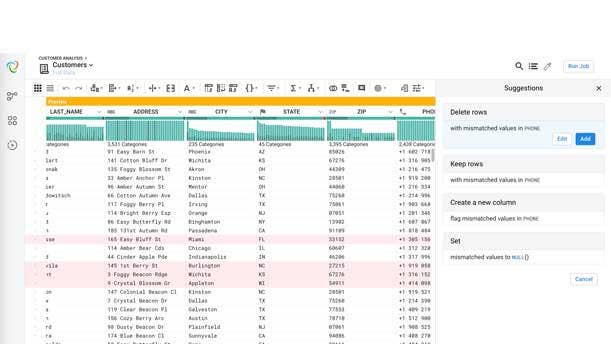

Trifacta Serverless Data Preparation Service On AWS

Based on Amazon EMR, the fully managed Serverless Data Preparation Service from San Francisco-based Trifacta allows businesses to more efficiently access their data and accelerate the process of cleaning data for machine learning and analytics. Companies can deploy Trifacta without having to manage their own infrastructure. The serverless architecture allows customers to dynamically scale computing capacity to match the shifting requirements of different workloads, which in turn increases efficiency and lowers operational costs. Trifacta’s integration with AWS services like the SageMaker managed machine-learning platform and the Redshift cloud data warehouse also allows users to more efficiently prepare data to help expand machine-learning initiatives and accelerate time to insight. The serverless data preparation service is the latest addition to Trifacta’s wide-ranging support for the AWS ecosystem.

Zadara Enterprise Storage For VMware Cloud On AWS

Zadara, an Irvine, Calif.-based provider of fully managed enterprise-grade storage on a pay-as-you-go consumption basis, this week introduced its offering specifically for users running VMware Cloud on AWS. Such users can get advanced data storage and management features attached to their virtual machines running in the VMware Cloud on AWS environment. Zadara uses a combination of industry-standard hardware and its own Zadara software to deliver enterprise-class data storage and management, and works with any data type and any protocol in any location. Zadara is available via public clouds, managed service providers, data centers, co-location partners, and on-premises in customers' data centers.