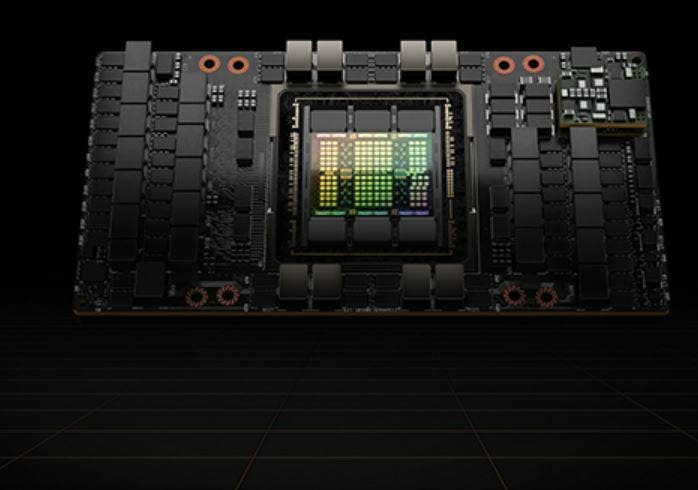

Nvidia H100 GPUs In Full Production, Shipping In October

Nvidia CEO Jensen Huang is announcing that the H100 will ship next month and that NeMo, a cloud service for customizing and deploying the inference of giant AI models, will debut.

Nvidia revealed Tuesday that its next-generation H100 Tensor Core GPU is now in full production and will ship next month.

“First, we are super excited to announce that the Nvidia H100 is now in full production,” said Ian Buck, vice president and general manager of Nvidia’s Tesla Data Center business, during a media pre-briefing before Nvidia CEO Jensen Huang’s keynote speech Tuesday at the Nvidia GPU Technology Conference (GTC). “Starting with the announcement in the keynote, our customers will be able to get access to the Nvidia H100 in Nvidia’s Launchpad.”

Launchpad is the Santa Clara, Calif.-based company’s “try before you buy” service where users can log in and experience H100 hands on. Shipments will commence in October, he added.


“It’s been a long road and we’re thrilled to have now H100 in full production,” Buck said.

Each system has eight GPUs, is 3.5 times more energy efficient and cuts total cost of ownership (TCO) for customers by a factor of more than three, he noted.

“Our customers are looking to deploy data centers…that are basically AI factories producing AIs for production use cases,” he said. “And we’re very excited to see what H100 is going to be doing for those customers delivering more throughput, more capabilities and continue to democratize AI everywhere…”

Earlier this month, Nvidia said that in MLPerf industry-standard AI benchmarks, which test machine-learning models, software and hardware, the H100 delivered up to 4.5 times more performance than previous-generation Nvidia GPUs, setting world records in AI inference performance.

Nvidia’s Huang is also expected to announce NeMo, one of Nvidia’s first cloud services, for customizing and deploying the inference of giant AI models, according to Richard Kerris, general manager for media and entertainment at Nvidia.

“It will democratize access to large language models…” he said. “You don’t need to train the large language model from scratch. We’ve already done that and made it easier for you.”

There’s also news around Nvidia’s Omniverse, the open platform for virtual collaboration and simulation, Kerris said.

“One of the biggest announcements that we have here at GTC is the first software and infrastructure as a service (SaaS),” he said.

Nvidia Omniverse Cloud is a comprehensive suite of cloud services for artists, developers, and enterprise teams to design, publish, operate and experience Metaverse applications anywhere.

“In much the same way today that you can experience the Internet on multiple devices, different types of browsers, and everything seamlessly, you’ll be able to interact with 3D environments in much the same way,” Kerris said.

Just as fashion designers, furniture and goods makers and retailers offer 3D products, now it can be done with augmented reality, he added.

GTC’s online conference and training sessions are set for September 19-22.