50-Plus Biggest AWS Announcements From re:Invent 2020

CRN breaks down more than 50 new product and service announcements from the top-ranked cloud provider’s annual partner and customer conference that continues in January.

Amazon Web Services wrapped the December portion of its virtual re:Invent 2020 conference last week after announcing more than 50 new products and services exemplifying the top-ranked cloud provider’s rapid pace of innovation.

Hybrid cloud offerings were front and center as CEO Andy Jassy unveiled smaller formats of AWS Outposts, additional AWS Local Zones and the extension of AWS container and Kubernetes services to on-premises deployments with Amazon Elastic Container Service (Amazon ECS) Anywhere and Amazon Elastic Kubernetes Service (Amazon EKS) Anywhere.

New products such as Graviton2-powered C6gn instances, larger and faster io2 Block Express volumes in compute/storage and the AWS Proton application management service all are aligned to the evolving application and infrastructure modernization needs of AWS’ enterprise customers, said Krishna Mohan, vice president and global head of the AWS business unit at Tata Consultancy Services (TCS), an AWS Premier Consulting Partner based in Mumbai, India.

“It’s also great to see their approach of integrating AI/ML (artificial intelligence/machine learning) at a foundational level of their product lines, making them intelligent and driving immediate value to enterprise customers,” Mohan said, pointing to the new SageMaker Data Wrangler, Feature Store and Pipelines releases, and Amazon Connect Wisdom. “Andy’s approach of redefining hybrid cloud that caters to both IT and IoT and driving industry solutions is most relevant in driving business transformation across manufacturing, healthcare and aviation industries through HPC (high-performance computing), machine data and computer vision.”

Jassy announced several offerings that won’t be available for another six to 12 months, including the AWS Trainium custom ML chip, Amazon ECS Anywhere and Amazon EKS Anywhere, and the open source project for the new Babelfish for Aurora PostgreSQL, which allows Microsoft SQL Server customers to move to less expensive PostgreSQL databases.

“AWS has normalized the practice of announcing something before it’s ready for release or even preview,” said Jeff Valentine, chief technology officer at CloudCheckr, a cloud management platform provider and AWS Advanced Technology Partner based in Rochester, N.Y. “This could prove to be a very useful strategy, as it forecasts to AWS’ competitors what they are working on, so they spend their resources playing catch-up.”

Here’s a breakdown of AWS’ announcements of new products and services at re:Invent, which continues with 200-plus new sessions on Jan. 12-14.

Habana Gaudi-Based EC2 Instances

In the first half of 2021, AWS plans to release Amazon Elastic Compute Cloud (EC2) instances powered by Gaudi accelerators from Intel’s Habana Labs that are designed specifically for training deep learning models.

“It will use Gaudi accelerators that will provide (up to) 40 percent better price performance than the best-performing GPU instances we have today,” Jassy said. “It will work with all the main machine learning frameworks -- PyTorch as well as TensorFlow.”

The new EC2 instances are ideal for deep learning training workloads of applications such as natural language processing, object detection and classification, recommendation and personalization, according to AWS.

AWS Trainium

AWS Trainium is a high-performance, ML training chip that AWS custom designed to deliver the most cost-effective training in the cloud, according to Jassy.

“Trainium will be even more cost-effective than the Habana chip,” Jassy said. “It’ll support all the major frameworks -- TensorFlow and PyTorch and MXNet.”

It shares the same AWS Neuron software development kit as AWS Inferentia, AWS’ first custom-designed, ML inference chip launched at re:Invent 2019.

The AWS Trainium chip is optimized specifically for deep learning training workloads for applications including image classification, semantic search, translation, voice recognition, natural language processing and recommendation engines.

AWS Trainium will be available in the second half of 2021 via Amazon EC2 instances, AWS Deep Learning AMIs and managed services including Amazon SageMaker, Amazon ECS, Amazon EKS and AWS Batch.

Five New EC2 Instances

AWS announced five new Amazon Elastic Compute Cloud (Amazon EC2) instances, including the AWS Graviton2-powered C6gn instance, a compute- and network-heavy instance with up to 100 gigabits per second of network bandwidth.

Graviton2 is AWS’ next generation Arm-based chip designed by AWS and its Annapurna Labs. It gives customers 40 percent better price performance than the most recent generations of the x86 processors from other providers, according to AWS.

“We are not close to being done investing and inventing with Graviton,” Jassy said. “People are seeing a big difference. Graviton is saving people a lot of money.”

New AMD-powered G4ad Graphics Processing Unit instances are designed to improve performance and cut costs for graphics-intensive applications. They feature AMD’s latest Radeon Pro V520 GPUs and second-generation EPYC processors.

New M5zn instances offer the fastest Intel Xeon Scalable processors in the cloud with an all-core turbo frequency of up to 4.5 GHz and up to 45 percent better compute performance per core than current Amazon EC2 M5 instances, according to AWS.

Next-generation, Intel-powered D3/D3en instances offer cost-effective, high-capacity local storage-per-vCPU for massively scaled storage workloads. The next generation of dense HDD storage instances, they provide 30 percent higher processor performance, increased capacity and reduced cost compared to D2 instances, according to AWS. They also provide 2.5x higher networking speed and 45 percent higher disk throughput compared to D2 instances.

New memory-optimized R5b instances are designed to deliver 3x higher Amazon Elastic Block Store (Amazon EBS) performance than same-sized R5 instances and the fastest block storage performance available for Amazon EC2.

Amazon EC2 Mac Instances

New Amazon EC2 Mac Instances allow Apple developers to natively run macOS in the AWS cloud for the first time to develop, build, test and sign Apple applications for the iPhone, iPad, Mac, Apple Watch, Apple TV and Safari.

Developers can provision and access macOS environments within minutes and dynamically scale capacity as needed while benefiting from AWS’ pay-as-you-go pricing, according to the cloud provider.

The EC2 Mac instances are powered by the AWS Nitro System and are built on Apple Mac mini computers with Intel Core i7 processors. Customers have a choice of both macOS Mojave (10.14) and macOS Catalina (10.15) operating systems, with support for macOS Big Sur (11.0) coming soon.

ECS Anywhere And EKS Anywhere

Amazon ECS Anywhere and Amazon EKS Anywhere were among the new offerings announced by Jassy to make it easier to provision, deploy and manage container applications on premises in customers’ own data centers.

“Amazon ECS Anywhere and Amazon EKS Anywhere allow customers to use ‘easy, AWS-style’ container orchestration in hybrid environments -- a clear shot across the bow to Google’s Anthos GKE (Google Kubernetes Engine),” CloudCheckr’s Valentine said.

While AWS has three container offerings -- AWS Fargate in addition to Amazon ECS and Amazon EKS -- customers still have a lot of containers that need to run on premises while they transition to the cloud, Jassy noted.

“People really wanted to have the same management and deployment mechanisms that they have in AWS also on premises, and customers have asked us to work on this,” Jassy said.

Amazon ECS Anywhere allows customers to use Amazon ECS -- a cloud-based, fully managed container orchestration service -- to orchestrate containers in their own data centers. Customers get the same AWS-style APIs and cluster configuration management pieces on premises that are available in the cloud. It spares them from having to run or maintain their own container orchestrators on premises.

“If you’re running ECS in AWS, you can run it on premises as well,” Jassy said. “It works with all of your on-premises infrastructure.”

Amazon EKS Anywhere enables customers to run Amazon EKS in their data centers. EKS is a managed service that allows customers to run Kubernetes on AWS without dealing with their own Kubernetes control plane or nodes.

“Just like with ECS Anywhere, EKS Anywhere lets you run EKS in your data centers on premises, alongside what you’re doing in AWS,” Jassy said.

Amazon EKS Anywhere works on any infrastructure -- bare metal, VMware vSphere or cloud virtual machines -- giving customers consistent Kubernetes management tooling that’s optimized to simplify cluster installation with default configurations for OS, container registry, logging, monitoring, networking and storage, according to AWS. It uses Amazon EKS Distro, the same open-source Kubernetes distribution deployed by Amazon EKS for customers to manually create Kubernetes clusters.

“We’re going to open source the EKS Kubernetes distribution to you, so you can start using that on premises,” Jassy said. “It’ll be exactly the same as what we do with EKS. We’ll make all the same patches and updates, so you can actually be starting to transition as you get ready for EKS Anywhere.”

Amazon ECS Anywhere and Amazon EKS Anywhere are slated to be available in the first half of 2021.

“Customers will be able to run ECS Anywhere and EKS Anywhere on any customer-managed infrastructure,” Deepak Singh, AWS’ vice president of compute services, said in a statement. “We are still working on the specific infrastructure types we will support at GA (general availability).”

AWS Proton

AWS Proton automates infrastructure provisioning and deployment tooling for developers’ serverless and container-based applications.

“AWS Proton is a big leap forward for customers and for Mission, delivering the first fully managed deployment service for containers and microservices,” said Simon Anderson, CEO at Mission, a Los Angeles-based managed services provider and AWS Premier Consulting Partner.

Jassy called the new application management service a “game changer” for managing the deployment of microservices.

“If you look at containers and serverless, these apps are assembled from a number of much smaller parts that together comprise an application,” Jassy said. “It’s actually hard. If you look at each of these microservices, they have their own code templates, they have their own CI/CD pipelines, they have their own monitoring, and most are maintained by separate teams. It means that there’s all these changes happening all the time from all these different teams, and it’s quite difficult to coordinate these and keep them consistent. It impacts all sorts of things, including quality and security. There really isn’t anything out there that helps customers manage this deployment challenge in a pervasive way.”

AWS’ team answered that with AWS Proton, which is now in preview. Jassy explained how it works.

“A central platform team or anybody central to an application will build a stack, and a stack is really a file that includes templates that use code to define and configure AWS services using a microservice, including identity and including monitoring,” he said. “It also includes the CI/CD pipeline template that defines the compilation of the code and the testing and the deployment process. And then it also includes a Proton schema that indicates parameters for the developers that they can add -- things like memory allocation or a Docker file…basically everything that’s needed to deploy a microservice except the actual application code.”

Then that central platform team will publish the stack to the Proton console. When a developer is ready to deploy their code, they’ll pick the template that best suits their use case, plug in the parameters they want and hit deploy.

“Proton will do all the rest,” Jassy said. “It provisions the AWS services specified in the stack using the parameters provided. It pushes the code through the CI/CD pipeline that compiles and tests and deploys the code to AWS services, and then sets up all the monitoring and alarms.”
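The workflow Jassy describes can be pictured with a minimal sketch. It is not the AWS Proton API: the `Stack` class, the `deploy` function, the file names and the parameter names below are all hypothetical, chosen only to show a platform team publishing a stack with a schema and a developer supplying parameters that are checked against it.

```python
from dataclasses import dataclass


@dataclass
class Stack:
    """Hypothetical model of a published stack; not the AWS Proton API."""
    name: str
    infrastructure_template: str  # file defining the AWS services to provision
    pipeline_template: str        # file defining the CI/CD process
    schema: dict                  # parameter name -> expected Python type


def deploy(stack, parameters):
    """Check developer parameters against the schema, then list the deploy steps."""
    unknown = set(parameters) - set(stack.schema)
    missing = set(stack.schema) - set(parameters)
    if unknown or missing:
        raise ValueError(f"unknown={sorted(unknown)} missing={sorted(missing)}")
    for key, expected_type in stack.schema.items():
        if not isinstance(parameters[key], expected_type):
            raise TypeError(f"{key} must be {expected_type.__name__}")
    # The three steps mirror the order in Jassy's description.
    return [
        f"provision services from {stack.infrastructure_template}",
        f"run pipeline from {stack.pipeline_template}",
        "set up monitoring and alarms",
    ]


# The platform team publishes the stack; a developer fills in the parameters.
web_stack = Stack(
    name="web-service",
    infrastructure_template="infrastructure.yaml",
    pipeline_template="pipeline.yaml",
    schema={"memory_mb": int, "dockerfile": str},
)
steps = deploy(web_stack, {"memory_mb": 512, "dockerfile": "Dockerfile"})
```

The point of the schema is that developers only touch the parameters the platform team has chosen to expose, while the templates stay under central control.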

The AWS Proton console lists all the downstream dependencies in a stack, according to Jassy. If an engineering team makes a change to the stack, they know all the downstream microservices teams that need to make those changes and can alert them and track whether the changes are made, he said.

Amazon Elastic Container Registry Public

Amazon Elastic Container Registry Public (Amazon ECR Public) is designed to provide developers an easy and highly available way to store, manage, share and deploy container software publicly.

Customers can use Amazon ECR to host both their private and public container images, eliminating the need to use public websites and registries, according to AWS.

“Customers no longer need to operate their own container repositories or worry about scaling the underlying infrastructure and can quickly publish public container images with a single command,” AWS said. “These images are geo-replicated for reliable availability around the world and offer faster downloads to quickly serve up images on-demand.”

A new Amazon ECR Public Gallery website also will allow anyone to browse and search for public container images, view developer-provided details and see pull commands without signing into AWS. Amazon ECR Public will notify customers when a new release of a public image becomes available.

EBS Gp3

AWS announced the launch of Amazon EBS Gp3, its next-generation, general-purpose, solid-state drive volumes for Amazon EBS that allow customers to provision performance independent of storage capacity and provide up to a 20 percent lower per-gigabyte cost than existing EBS Gp2 volumes. Customers can scale IOPS (input/output operations per second) and throughput without provisioning additional block storage capacity.

“The announcement of EBS Gp3 that allows you to select IOPS and throughput independently of volume size will be immediately useful for some of our customers who have pushed the limits of the Gp2 architecture with their data applications,” Mission’s Anderson said.

Data and data stores are being radically reinvented, according to Jassy.

“Analysts say that in every hour today, we’re creating more data than what we did an entire year 20 years ago, or they predict that in the next three years, there will be more data created than in the prior 30 years combined,” Jassy said. “This is an incredible amount of data growth, and the old tools and the old data stores that existed the last 20 to 30 years are not going to cut it to be able to handle this.”

Block storage is a foundational and pervasive type of storage used in computing, Jassy noted. Unlike object storage, which has metadata that governs the access and the classification of it, block storage has its storage or data split into evenly sized blocks that just have an address and nothing else.

“And since it doesn’t have that metadata, it means that the reads and the writes and access to them are much faster,” Jassy said. “It’s why people use block storage with virtually every EC2 use case.”

AWS launched Amazon EBS in 2008 as the first high-performance, scalable block store in the cloud.

“It gave you an easy ability to provision what storage you needed and what IOPS you needed and what throughput you needed, and then you could adjust it as you saw fit,” Jassy said.

In 2014, AWS built Gp2, on which the vast majority of EBS workloads run, but customers asked for per-gigabyte costs to be reduced and the ability to sometimes scale throughput, or scale IOPS, without also having to scale the storage with it.

“The baseline performance of these new Gp3 volumes is 3,000 IOPS and 125 megabytes per second, but you can disperse that and scale that up to a peak of 1,000 megabytes per second, which is four times that of Gp2,” Jassy said. “Customers will be able to run many more of their demanding workloads on Gp3s than they even were for Gp2s.”
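As a rough illustration of provisioning performance independently of capacity, the sketch below builds the arguments for a volume request in which size, IOPS and throughput are separate knobs. The function name and the placeholder availability zone are assumptions; the dictionary keys are modeled on the EC2 `CreateVolume` parameters as exposed by boto3, and the limits checked are only the figures Jassy quotes.

```python
# Figures quoted in the article for Gp3 volumes.
BASELINE_IOPS = 3000
BASELINE_THROUGHPUT_MBPS = 125
PEAK_THROUGHPUT_MBPS = 1000


def gp3_volume_request(size_gib, iops=BASELINE_IOPS,
                       throughput_mbps=BASELINE_THROUGHPUT_MBPS,
                       availability_zone="us-east-1a"):
    """Build CreateVolume arguments; size, IOPS and throughput are set separately."""
    if iops < BASELINE_IOPS:
        raise ValueError("IOPS cannot be below the 3,000 baseline")
    if not BASELINE_THROUGHPUT_MBPS <= throughput_mbps <= PEAK_THROUGHPUT_MBPS:
        raise ValueError("throughput must be between 125 and 1,000 MB/s")
    return {
        "AvailabilityZone": availability_zone,  # placeholder zone (assumption)
        "Size": size_gib,
        "VolumeType": "gp3",
        "Iops": iops,
        "Throughput": throughput_mbps,
    }


# The same 100 GiB volume, once at baseline and once with throughput at the peak.
baseline = gp3_volume_request(100)
fast = gp3_volume_request(100, throughput_mbps=PEAK_THROUGHPUT_MBPS)
```

With credentials configured, a dictionary like `fast` could in principle be passed to `boto3.client("ec2").create_volume(**fast)`; that call is left out here so the sketch runs without an AWS account.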

The 20 percent cost reduction is great news, according to Tim Varma, global director of product management at Syntax, a managed cloud provider for mission-critical enterprise applications and AWS Advanced Consulting Partner based in Montreal. But, Varma said, “We are still hoping for a lower-priced EBS snapshot storage price, but no announcement has been made just yet.”

New io2 Block Express EBS Volumes

Jassy billed new io2 Block Express EBS volumes -- now in preview -- as the first storage area network (SAN) for the cloud that’s designed for some of the largest, most demanding deployments of Oracle, SAP HANA, Microsoft SQL Server and SAS Analytics.

AWS’ new io2 Block Express volumes give customers up to 256,000 IOPS, 4,000 megabytes per second throughput and 64 TiB of storage capacity.

“That’s 4x the dimensions of the io2s on every single one of those,” Jassy said. “That is massive for a single volume. Nobody has anything close to that in the cloud, and what it means is that you now get the performance of SANs in the cloud, but without the headaches around cost management.”
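Taking the 4x claim at face value, the per-volume limits it implies for existing io2 volumes can be worked out by dividing each Block Express figure by four. The short calculation below does only that arithmetic; the variable names are illustrative.

```python
# Block Express per-volume limits as stated in the article.
block_express = {"iops": 256_000, "throughput_mbps": 4_000, "capacity_tib": 64}

# Limits for existing io2 volumes implied by "4x ... on every single one of those".
implied_io2 = {name: value // 4 for name, value in block_express.items()}
```

That works out to 64,000 IOPS, 1,000 megabytes per second and 16 TiB per io2 volume.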

Customers can spin up a Block Express Volume, and AWS will manage it, back it up and replicate it across an availability zone.

“You can use the EBS snapshot capability to auto lifecycle a policy to back it up to S3,” Jassy said. “And if you need more capacity, you just spin up another Block Express volume at a much lower cost than trying to do it at $200,000 a clip.”

That’s the cost that customers can incur when running SANs on premises and maintaining them across multiple data centers, according to Jassy.

AWS will add additional SAN features in 2021, including EBS Multi-Attach and I/O fencing, and will make Amazon EBS Elastic Volumes work with it.

“There was a lot of very complicated and sophisticated and innovative engineering to give you Block Express, but it’s a huge game-changer for your most demanding applications that you want to run in EBS,” Jassy said.

Amazon Aurora Serverless v2

AWS unveiled the next generation of Amazon Aurora Serverless, the on-demand, auto-scaling configuration for the Amazon Aurora relational database service – AWS’ fastest growing service in its history.

Aurora Serverless v2 scales to hundreds of thousands of transactions in a fraction of a second, according to AWS, delivering up to 90 percent cost savings when compared to provisioning for peak capacity.

“Aurora Serverless v2 totally changes the game for you with serverless as it relates to Aurora,” Jassy said. “You can scale up as big as you need to instantaneously. It only scales you up in the precise increments that you need. It adds in a lot of the Aurora capabilities people wanted: multi AZ and Global Database and read replicas and backtrack and Parallel Query, and it really makes Aurora Serverless v2 ideal for virtually every Aurora workload.”

Amazon Aurora Serverless v2 is available in preview for the MySQL 5.7-compatible edition of Amazon Aurora and will be available for PostgreSQL in the first half of 2021.

Babelfish for PostgreSQL

Babelfish for Amazon Aurora PostgreSQL, now in preview, lets customers run Microsoft SQL Server applications directly on Amazon Aurora PostgreSQL with little to no code changes.

Babelfish provides a new translation layer for Amazon Aurora PostgreSQL that enables Aurora to understand commands from applications written for Microsoft SQL Server. It enables Aurora PostgreSQL to understand T-SQL, Microsoft SQL Server’s proprietary SQL dialect, and supports the same communications protocol, sparing customers from having to rewrite all their applications’ database requests.

“Product enhancements and new rollouts in the enterprise database arena like Babelfish and serverless Aurora will further expand database freedom, providing more choices to enterprise customers on Microsoft SQL Server,” Tata Consultancy’s Mohan said.

Customers had told AWS that they use the AWS Database Migration Service to move their database data, and they use AWS’ Schema Conversion Tool to convert the schema, but there was a third area that was harder than they hoped, according to Jassy.

“And that’s trying to figure out what to do with the application code that is tied to that proprietary database,” he said. “Customers have asked us, can you do something to make this easier for us, because we want to move these workloads to Aurora -- and especially with the way they’ve watched Microsoft get more punitive and more constrained and more aggressive with their licensing.”

Babelfish is a built-in feature of Amazon Aurora available at no additional cost and can be enabled on an Amazon Aurora cluster with a few clicks in the RDS management console. After migrating their data using the AWS Database Migration Service, customers can update their application configuration to point to Amazon Aurora instead of SQL Server and start testing the application there. Once customers have tested the application, they no longer need SQL Server and can “shed those expensive and constrained SQL Server licenses,” according to Jassy.

“Because Aurora now is able to understand both T-SQL and Postgre, you can write application functionality in Postgre to run side by side with your legacy SQL Server code,” Jassy said.

When AWS shared the news of the offering privately with customers, they were so excited that AWS realized that “this was probably bigger than just Aurora Postgre,” according to Jassy.

“People really wanted the freedom to move away from these proprietary databases and to Postgre,” he said.

AWS plans to open source Babelfish for PostgreSQL to extend the benefits of the Babelfish for Amazon Aurora PostgreSQL translation layer to more organizations. AWS will make it available on GitHub under the permissive Apache 2.0 license in 2021.

“It means that you can modify or tweak or distribute in whatever fashion you see fit,” Jassy said. “All the work and planning is going to be done on GitHub, so you have transparency of what’s happening with the project. This is a huge enabler for customers to move away from these frustrated, old-guard proprietary databases to the open engines of Postgre.”

Amazon HealthLake

Now in preview, Amazon HealthLake is a HIPAA-eligible service that allows healthcare providers, health insurance companies and pharmaceutical companies to store, transform, query and analyze health data at petabyte scale in minutes.

Amazon HealthLake aggregates an organization’s complete data across various silos and disparate formats into a centralized AWS data lake and automatically normalizes the data using ML. It identifies each piece of clinical information and tags and indexes events in a timeline view with standardized labels, so it can be easily searched. And it structures all the data into the Fast Healthcare Interoperability Resources (FHIR) industry standard format.

Amazon DevOps Guru

Amazon DevOps Guru is a new service that uses ML to identify operational issues long before they impact customers, according to Jassy.

Customers had asked the cloud provider, since it has so much information about the ways in which all kinds of applications operate on AWS, to build a service using ML that allows them to predict when there are going to be operational problems, according to Jassy.

“DevOps Guru makes it much easier for developers to anticipate operational issues before it bites them,” Jassy said. “Using machine learning informed by years of Amazon and AWS operational experience and code…we identify potential issues with missing or misconfigured alarms or resources that are approaching resource limits or code changes that could cause outages or under-provisioned capacity or over-utilization of databases or memory leaks. When we find one of those that we think could lead to a problem, we will notify you either by an SNS (Amazon Simple Notification Service) notification or a CloudWatch event or third-party tools like Slack, and we’ll also recommend remediation.”

Amazon DevOps Guru will be particularly useful for Mission, according to Anderson, who said his company will look at integrating it into its managed DevOps service automation and workflows.

New Amazon Connect Features

AWS announced five new ML-powered capabilities for Amazon Connect, its cloud-based, customer call center solution introduced in 2017.

Amazon Connect Wisdom, in preview, uses ML to cut the time that agents spend searching for product and service information to solve customer issues in real time. If a customer calls and says a product has “arrived broken,” for example, Wisdom will search all the relevant data repositories for information tied to that issue, and agents will automatically see it in their consoles.

“Wisdom has all these built-in connectors to relevant knowledge repositories -- either your own or to some third-party ones,” Jassy said. “We’ll start with having connectors to Salesforce and ServiceNow, but there will be more coming.”

Amazon Connect Customer Profiles is now available, giving contact center agents a more unified profile of each customer, so they can provide more personalized service. It combines customer contact history information from Amazon Connect with customer information from customer relationship management (CRM), e-commerce and order management applications into a unified customer profile that’s displayed in the Amazon Connect agent application when a call or chat starts. AWS customers can use pre-built connectors to third-party applications including Marketo, Salesforce, ServiceNow and Zendesk directly from the Amazon Connect console.

Real-Time Contact Lens for Amazon Connect, also available now, offers a new real-time call analytics capability for contact center managers to detect customer experience issues during live calls. It builds on Contact Lens for Amazon Connect, which was introduced in 2019 to allow contact center managers to analyze recordings of completed customer calls.

Amazon Connect Tasks automates, tracks and manages tasks for contact center agents, improving agent productivity by up to 30 percent, according to AWS. It’s also available now.

Amazon Connect Voice ID, in preview, uses voice analysis to provide real-time caller authentication to make voice interactions faster and more secure, according to AWS.

Amazon Connect is one of AWS’ fastest growing services, according to Jassy. During the coronavirus pandemic, more than 5,000 new Connect customers have started using the service to spin up call centers remotely as their customer service agents began working from home, he said.

Mission’s Anderson welcomed the new capabilities: “We already use Connect for our contact center, and the natural language query features -- plus easily tying in all service and customer data sources into one real-time view for our customer success and cloud operations teams -- should drive even better customer experiences and operating efficiencies.”

The new developments for call centers and ML stood out as being the most beneficial for Deloitte Consulting customers, according to Mike Kavis, chief cloud architect for Deloitte’s cloud practice.

“Due to COVID, we are seeing a lot of clients asking to reduce costs or totally outsource their call centers,” Kavis said. “In terms of machine learning, many clients are experimenting, but getting to production is hard. Much of the simplification of managing data, models and deployments will help. Also, leveraging machine learning for automated business use cases in areas like cold chain, retail automation, predictive maintenance, call center and others are huge game changers.”

New SageMaker Capabilities

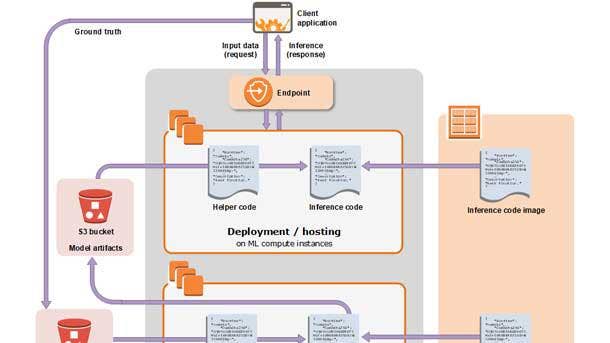

AWS announced a host of new capabilities for Amazon SageMaker, its fully managed ML service that allows data scientists and developers to quickly build and train ML models and deploy them into a production-ready hosted environment.

“Data and AI/ML product line expansions in the SageMaker product line are quite relevant, as the usage of ML grows exponentially,” said Tata Consultancy’s Mohan. “This is an area that can drive the most consumption, because customers will see the immediate and tangible value of cloud platform adoption.”

Amazon SageMaker Data Wrangler reduces the time it takes to aggregate and prepare data for ML from weeks to minutes, according to AWS. Amazon SageMaker Feature Store is a fully managed, purpose-built data store for storing, updating, retrieving and sharing ML features. Amazon SageMaker Pipelines is the first purpose-built, easy-to-use continuous integration and continuous delivery (CI/CD) service for ML, according to AWS.

Amazon SageMaker Clarify provides developers with greater visibility into their training data, so they can detect bias in ML models and understand model predictions. Deep profiling for Amazon SageMaker Debugger enables developers to train their models faster by automatically monitoring system resource utilization and providing alerts for training bottlenecks. Distributed Training on Amazon SageMaker can train large, complex deep learning models up to two times faster than current ML processors, according to AWS.

Amazon SageMaker Edge Manager allows developers to optimize, secure, monitor and maintain ML models deployed on fleets of edge devices such as mobile devices, smart cameras, robots and personal computers. Amazon SageMaker JumpStart helps users bring ML applications to market with a developer portal that includes pre-trained models and pre-built workflows.

Customers are struggling with how to approach AI and ML because the technology is not the value – the outcome or insight is, and use cases drive the adoption, said John Tweardy, chief technology officer and strategic growth leader for the strategy and analytics practice at Deloitte Consulting.

“Technology is a partner on this journey, but the business must own the patterns, models and adoption rate and then, together, change the culture,” Tweardy said. “The multiple releases to SageMaker with Data Wrangler, Pipelines and Feature Store all make the building and maintenance of the models easier and more reusable. This should support faster adoption and speed, and enable data scientists to take on more complex challenges.”

AWS’ breadth and pace of innovation across the stack continue to impress, and that’s especially evident in what AWS rolled out in its analytics and ML portfolio, said Prat Moghe, CEO of Cazena, a Waltham, Mass.-based AWS Advanced Technology Partner that offers instant cloud data lakes.

“Innovations like Amazon SageMaker Data Wrangler for preparing data for machine learning, AWS Glue Elastic Views, SageMaker Pipelines and beyond are the reason AWS now has more than 100,000 customers using its cloud for machine learning, the reason it has the largest number of data lake instances, etc.,” Moghe said.

AQUA for Amazon Redshift

AQUA (Advanced Query Accelerator) for Amazon Redshift provides a new distributed and hardware-accelerated cache that brings compute to the storage layer for Amazon Redshift, AWS’ cloud data warehouse.

AQUA uses AWS-designed analytics processors that accelerate data compression, encryption and data processing on queries that scan, filter and aggregate large data sets, and it delivers up to 10x faster query performance than other cloud data warehouses, according to AWS.

AQUA is available with the RA3.16XL and RA3.4XL nodes at no additional cost and doesn’t require code changes. Now in preview in AWS’ U.S. East (Ohio), U.S. East (N. Virginia) and U.S. West (Oregon) cloud regions, it’s expected to be generally available in January.

AWS also announced Amazon Redshift ML, also in preview, which allows data warehouse users such as data analysts, database developers and data scientists to create, train and deploy ML models using familiar structured query language (SQL) commands. It works with Amazon SageMaker Autopilot to enable ML algorithms to run on Amazon Redshift data without manually selecting, building or training ML models.

AWS Glue Elastic Views

Another new analytics capability, now in preview, AWS Glue Elastic Views lets developers easily build materialized views that automatically combine and replicate data across multiple data stores, without having to write custom code.

“It lets you write a little bit of SQL to create a virtual table or materialized view of the data that you want to copy and move and combine from one source data store to a target data store,” Jassy said. “Then it manages all the dependencies of those steps as they move. And if something changes in the source data store, Elastic Views takes that and automatically changes it in the target store in seconds. If it turns out, for whatever reason, the data structure changes from one of the data stores, Elastic Views will alert the person that created the materialized view and allow them to adjust that.”

AWS Glue Elastic Views supports AWS databases and data stores, including Amazon DynamoDB, Amazon S3, Amazon Redshift and Amazon Elasticsearch Service, with support for Amazon RDS, Amazon Aurora and others expected in the future. The offering is serverless and automatically scales capacity up or down based on demand.

Amazon QuickSight Q

Launched in 2016, Amazon QuickSight is a scalable, serverless, embeddable, ML-powered business intelligence (BI) service built for the cloud.

At re:Invent, AWS introduced the new Amazon QuickSight Q, an ML-powered capability for Amazon QuickSight that allows users to type questions about business data in natural language and get answers in seconds.

“You don’t have to know the tables, you don’t have to know the data stores, you don’t have to figure out how to ask the question the right way -- you just ask it the way you speak,” Jassy said. “Q will do auto-filling and spellchecking, so that it makes it even easier to get those queries written.”

Amazon QuickSight Q uses sophisticated deep learning, natural language processing, schema understanding and semantic parsing for SQL code generation, according to Jassy.

“It is not simple,” he said. “However, customers don’t need to know anything about that machine learning.”

AWS trained the models over millions of data points and different vertical domains, according to Jassy, who said Amazon QuickSight Q would “completely change the BI experience.”

“It’s pretty amazing how fast machine learning is continuing to evolve,” Jassy said. “And although we have over 100,000 customers who are using our machine learning services -- a lot more than you’ll find anywhere else -- and a much broader array of machine learning services than you’ll find elsewhere, we understand that it is still very early days in machine learning, and we have a lot to invent.”

AWS’ re:Invent messaging on ML was so pervasive that CloudCheckr’s Valentine said he foresees a future where “AWS isn’t viewed as a public cloud company at all and is instead an IT solution provider powered by ML.”

Lambda Pricing Change

Jassy announced that AWS had changed the billing for AWS Lambda, its event-driven, serverless computing platform, effective for December’s billing cycle.

The cloud provider changed the billing increment for Lambda function duration from 100 milliseconds to 1 millisecond, which means customers can save up to 70 percent for some workloads, according to Jassy.
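The arithmetic behind that figure can be sketched in a few lines of Python. This is a rough illustration, not AWS' billing logic: it assumes the duration charge is proportional to billed time, that durations are rounded up to the next billing increment, and it ignores per-request fees and memory settings. The function names and the sample durations are hypothetical.

```python
import math


def billed_ms(duration_ms: float, increment_ms: int) -> int:
    """Round a function's run time up to the next billing increment."""
    return math.ceil(duration_ms / increment_ms) * increment_ms


def savings_fraction(duration_ms: float,
                     old_increment_ms: int = 100,
                     new_increment_ms: int = 1) -> float:
    """Fraction of the duration charge saved by the finer increment.

    Assumes the same price per billed millisecond before and after,
    so the price itself cancels out of the ratio.
    """
    old = billed_ms(duration_ms, old_increment_ms)
    new = billed_ms(duration_ms, new_increment_ms)
    return (old - new) / old


if __name__ == "__main__":
    # Hypothetical run times, chosen only to show the effect of rounding.
    for duration in (30, 95, 250):
        print(duration,
              billed_ms(duration, 100),
              billed_ms(duration, 1),
              round(savings_fraction(duration), 3))
```

Under these assumptions, a function that runs for 30 milliseconds was billed for 100 milliseconds before the change and for 30 milliseconds after it, a 70 percent reduction in the duration charge. The benefit shrinks as run times grow, which is why the savings apply to some workloads rather than all.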

Jonathan LaCour, chief technology officer at Mission, said he was particularly struck by AWS’ continued investments in driving serverless forward in meaningful ways.

“The move from 100ms to 1ms granularity for billing on Lambda not only will result in immediate cost savings for current Lambda users, it reinforces and strengthens the power that Lambda gives AWS customers from a cost-optimization perspective,” LaCour said. “When you can trace down to the millisecond how much a single function is costing you, that provides a massive incentive to invest in optimizing your hottest functions. As a developer, having such clear financial incentive for refactoring and optimizing code is a breath of fresh air.”

The bigger serverless announcement for LaCour, though, was AWS enabling developers to build and deploy Lambda functions using the workflows, technologies and tools they already use for containerization.

Container Image Support For AWS Lambda

AWS now offers container image support for AWS Lambda, which means customers can build Lambda-based applications using existing container development workflows.

“Now you can package code and dependencies as any Docker container image or any Open Container Initiative-compatible container image or really any third-party base container image or something that AWS has maintained as a base container image,” Jassy said. “It totally changes your ability to deploy Lambda functions, along with the tools that you’ve invested in on containers, so I think customers are going to find this very handy.”

Users can package and deploy Lambda functions as container images up to 10 gigabytes in size.

AWS has always provided the “absolute best building blocks in the industry,” but has sometimes struggled with developer experience, according to LaCour.

“By enabling developers to leverage container images to build and deploy Lambda functions, they’ve attacked this problem head on, giving developers a single, familiar approach,” he said.

AWS is seeing big momentum with AWS Lambda, according to Jassy.

“Customers have really loved this event-driven computing model,” he said, noting AWS has added triggers in a lot of its services, so that customers can trigger serverless actions from them. “We have them now in 140 AWS services, which is seven times more than you’ll find anywhere else. If you look inside Amazon, and you look at all the new applications that were built in 2020, half of them are using Lambda as their compute engine. That’s incredible growth -- hundreds of thousands of customers now are using Lambda.”

Andy Stocchetti, associate director of software development at ServerCentral Turing Group (SCTG), a Chicago-based cloud consultancy, said he was very excited to hear about Lambda container images.

“We already rely heavily on Docker containers for our applications due to the confidence you gain from the build-once-deploy-many mindset,” said Stocchetti, whose company is an AWS Advanced Consulting Partner. “I look forward to that same confidence with Lambda workloads. The addition of the runtime interface emulator means that our teams can develop and test locally. By cutting out the dependency to deploy to the cloud to test, it will allow us to debug and iterate on software projects at a much faster rate.”

New AWS Outposts Formats

AWS unveiled two new smaller formats of AWS Outposts. The fully managed hybrid cloud offering extends AWS infrastructure, services, APIs and tools to virtually any customer data center, co-location space or on-premises facility with compute-and-storage racks built with AWS-designed hardware that are installed and maintained by AWS. The Outposts rack is an industry-standard 42U rack that’s 80 inches tall, 24 inches wide and 48 inches deep.

The new smaller Outposts formats -- 1U and 2U rack-mountable servers -- let customers run AWS infrastructure in locations with less space. The 1U size is 1 3/4 inches tall, about the size of a pizza box and one-fortieth the size of the Outposts rack that AWS launched a year ago. The 2U size is about 3 1/2 inches tall, or almost two pizza boxes stacked.

“These two smaller Outpost formats have the same functionalities as Outposts, just for a smaller space,” Jassy said. “Now it means that restaurants or hospitals or retail stores or factories can use Outposts to have AWS distributed to that edge.”

The smaller AWS Outposts form factors will be available in 2021.

“Making Outposts available at smaller form factors will drive its adoption significantly, as it drives native capability of AWS at affordable price points and limited scale,” said Mohan, of Tata Consultancy Services.

New AWS Local Zones

The cloud provider expanded AWS Local Zones to Houston, Boston, and Miami.

Local Zones, announced at last year’s re:Invent, put AWS infrastructure closer to clients in major metropolitan areas who need low latency, but don’t want to provision or maintain data center space in those locations. Customers can run AWS compute, storage, database, analytics and ML services, and deliver applications with single-digit millisecond latencies to nearby end users.

AWS rolled out its first Local Zone in Los Angeles, aimed at filmmakers, graphics renderers and gaming companies. It plans to add 12 more Local Zones next year in Atlanta, Chicago, Dallas, Denver, Kansas City, Las Vegas, Minneapolis, New York, Philadelphia, Phoenix, Portland and Seattle.

New Industrial ML Services

AWS heralded five new industrial ML services, including Amazon Monitron, an end-to-end solution for industrial equipment monitoring that uses ML to detect abnormal behavior in industrial machinery, enabling customers to implement predictive maintenance and reduce unplanned downtime.

Monitron gives customers sensors and a gateway device to send the data to AWS, which builds custom ML models for them, along with a mobile app whose user interface shows them what’s happening, according to Jassy.

“All our customers do is…mount the sensors to your equipment, you start sending the data through the gateway device and then, as we take the data in, we build a machine learning model that looks at what normal looks like for you on sound or vibration,” he said. “Then, as you continue to stream that data to us, we will use the model to show you where there are anomalies and send that back to you in the mobile app, so you can tell where you might need to do predictive maintenance.”
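The learn-normal-then-flag-deviations pattern Jassy describes can be illustrated with a deliberately simple stand-in. The sketch below is not how Monitron works internally, which AWS did not detail. It assumes a basic mean-and-standard-deviation baseline, and the function names, the threshold and the sample vibration readings are all hypothetical.

```python
from statistics import mean, stdev


def fit_baseline(readings):
    """Summarize 'normal' behavior as the mean and standard deviation."""
    return mean(readings), stdev(readings)


def find_anomalies(stream, baseline, threshold=3.0):
    """Return indexes of readings that sit more than `threshold`
    standard deviations away from the baseline mean."""
    mu, sigma = baseline
    return [i for i, value in enumerate(stream)
            if abs(value - mu) > threshold * sigma]


if __name__ == "__main__":
    # Hypothetical vibration readings collected while the machine is healthy.
    normal = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95]
    baseline = fit_baseline(normal)

    # Later readings streamed from the same sensor; the third one spikes.
    incoming = [1.0, 1.02, 2.5, 0.98]
    print(find_anomalies(incoming, baseline))
```

In this toy run only the spike at index 2 is flagged. A production service would need to cope with drift, multiple signals such as sound and vibration, and noisy sensors, which is the work the managed model is meant to take off customers’ hands.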

The other four new industrial ML services are Amazon Lookout for Equipment, which gives customers with existing equipment sensors the ability to use AWS ML models to detect abnormal equipment behavior and enable predictive maintenance; AWS Panorama Appliance, which provides a new hardware appliance that allows organizations to add computer vision to their existing on-premises cameras to improve quality control and workplace safety; AWS Panorama Software Development Kit, which allows industrial camera manufacturers to embed computer vision capabilities in new cameras; and Amazon Lookout for Vision, which uses AWS-trained computer vision models on images and video streams to find anomalies and flaws in products or processes.

Deloitte’s Tweardy was particularly excited about what he referred to as the “edge advantage” and the enablement of smart manufacturing.

“This moves AWS technology closer to the business and opens up an entirely new market outside the traditional data center space,” he said. “This marries nicely with Deloitte’s new academic collaboration with Wichita State and our Smart Factory center. Streamlining computation and insights at the edge with AWS Outposts, Wavelength for 5G and connected IoT appliances and predictive ML models will support the continuing IT and OT convergence and need for real-time decisions and automation at the edge. This is timely and relevant and supports a broader set of conversations beyond the IT domain -- into supply chain, logistics, business operations and manufacturing.”

IoT Updates

AWS unveiled multiple internet of things (IoT) capabilities.

AWS IoT SiteWise Edge, now in preview, is a managed service that makes it easier to collect, store, organize and monitor data from industrial equipment at scale to help make better data-driven decisions. The software is installed on local hardware such as third-party industrial computers and gateways or on AWS Outposts and AWS Snow Family compute devices.

AWS IoT Core for LoRaWAN is a fully managed feature of AWS IoT Core that allows enterprises to connect wireless devices that use low-power, long-range wide area network (LoRaWAN) technology without developing or operating a LoRaWAN Network Server themselves.

AWS IoT Greengrass 2.0 is a new version of AWS IoT Greengrass, an open-source IoT edge runtime and cloud service that helps customers build, deploy and manage device software. The connected edge platform now supports additional programming languages, development environments and hardware.

AWS announced the first FreeRTOS Long Term Support release. It gives embedded developers at original equipment manufacturers and microcontroller vendors the predictability and feature stability of long-term support libraries to build applications for microcontroller unit-based devices, with assurance from AWS of security patches and critical bug fixes for two years.

Amazon Sidewalk Integration for AWS IoT Core enables customers to easily onboard their Sidewalk device fleets to AWS IoT Core. Amazon Sidewalk is a shared network that helps devices work better through better connectivity options. It was designed to support a range of customer devices from pet and valuables location trackers, to smart home security and lighting controllers, to remote diagnostics for home appliances and tools.

In preview, Fleet Hub for AWS IoT Device Management enables customers to easily create a fully managed web application to view and interact with their device fleets to monitor device health, respond to alarms, take remote actions and reduce time for troubleshooting.

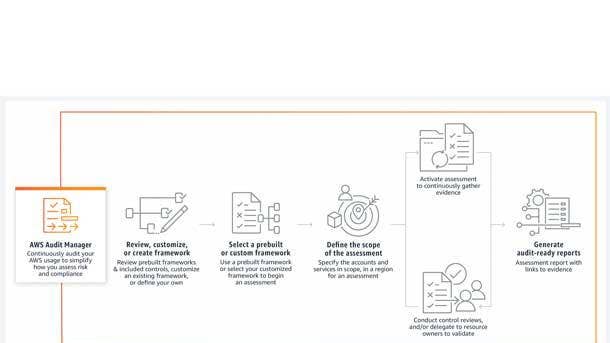

AWS Audit Manager

AWS Audit Manager is a new solution that helps customers continuously audit their AWS usage to simplify risk assessment and compliance with regulations and industry standards such as the CIS AWS Foundations Benchmark, the General Data Protection Regulation (GDPR) and the Payment Card Industry Data Security Standard (PCI DSS).

It automates evidence collection to help assess whether a customer’s policies, procedures and activities are operating effectively. At audit time, AWS Audit Manager helps manage stakeholder reviews of those controls and enables customers to more quickly build audit-ready reports with less manual effort.