The 10 Most Disruptive Technologies Of 2020

These technologies are shaking up the way people communicate, produce goods, accelerate AI models and develop IoT applications, among other things.

Changing The Tech Landscape

Several emerging technologies are beginning to take hold in the IT industry. Among them: 3-D printing, containers for edge computing and robotic process automation.

Some of these technologies, like augmented reality, have gained traction this year because they solve problems created by the coronavirus pandemic. In the case of AR, the technology lets field workers connect with remote experts through a visual overlay that makes it easy to understand how a process works. 3-D printing has also proven useful for rapidly producing medical supplies.


But not all of this year’s most disruptive technologies have been related to the pandemic, at least directly. For instance, Arm-based processors have increasingly been adopted by major vendors like Amazon Web Services and Apple because they offer performance advantages over x86 chips. And the need to train increasingly large machine learning models and to run artificial intelligence workloads on smaller devices has driven new innovations in AI accelerator chips.

What follows are the 10 most disruptive technologies of 2020.

3-D Printing

3-D printing technology has proven to be an essential tool in the fight against COVID-19, with its ability to rapidly produce medical supplies such as nasal swabs and face shields. The utility of 3-D printers during the pandemic is likely to result in wider deployments in the future as more people become aware of their benefits, from being able to move directly from design to production to having geographically dispersed printers, executives and solution providers told CRN earlier this year. At the end of June, HP Inc. CEO Enrique Lores said the company has “seen a big adoption of 3-D printing to print medical products,” with HP and partners having used its systems to produce more than 2.3 million 3-D printed parts for medical applications year-to-date.

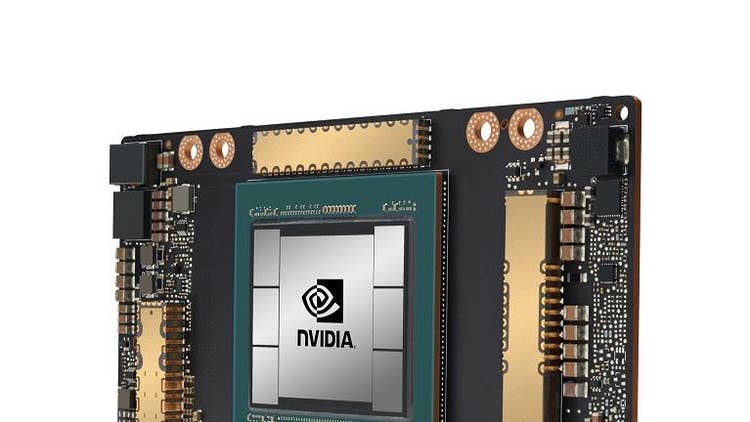

AI Accelerator Chips

New breakthroughs in AI computing were made this year, thanks to new chips from Nvidia, Intel and a handful of startups. In May, Nvidia revealed its new A100 data center GPU, based on its next-generation Ampere architecture, which can accelerate both training and inference workloads while also offering the ability to partition itself into as many as seven distinct GPU instances. The company said five DGX A100 systems, each of which includes eight A100s, can perform the same amount of training work as 50 previous-generation DGX-1 systems and 600 CPU systems at a tenth of the cost and a twentieth of the power. Near the end of the year, Amazon Web Services announced a new EC2 instance for training deep learning models using Intel’s new Habana Gaudi accelerator, which AWS said could offer up to 40 percent better price-performance than similar cloud instances running Nvidia GPUs. Among the AI chip startups, Graphcore recently said its IPU-M2000 systems can outperform Nvidia’s A100, with some caveats.

Arm-Based Processors

2020 may have marked the beginning of the end of Intel’s x86 dominance, as major companies like Amazon Web Services, Apple and Microsoft began shifting to their own in-house silicon for servers and devices. AWS is the farthest along, having launched EC2 C6g and R6g cloud instances running its Arm-based Graviton2 processors, which the company claims can deliver up to 40 percent better price-performance than x86-based EC2 C5 instances. In the fall, Apple revealed new Mac computers running its new Arm-based M1 processors, which the company said can outperform competing processors by up to two times. Most recently, Bloomberg reported that Microsoft is working on its own Arm-based chips for Azure servers and Surface PCs. Arm is also enabling the emergence of new standalone semiconductor companies like Ampere Computing, which is led by former Intel executive Renee James and claims its 80-core Altra processor can provide better performance than Intel and AMD CPUs.

Augmented Reality

Augmented reality gained a new level of utility when the coronavirus pandemic hit, thanks in part to the technology’s ability to connect field workers with remote experts to solve problems. One AR vendor, PTC, said it noticed a four-fold increase in usage of its Vuforia Chalk app, which it likened to “FaceTime on steroids,” after the pandemic prompted travel restrictions, social distancing rules and other measures to reduce the spread of COVID-19. Solution provider CBT said utility and manufacturing companies are increasingly interested in remote expert and connected worker solutions, which can deliver a return on investment of 75 percent for cost savings and 90 percent for time savings. AR technologies can also enable heads-up displays for readings from IoT-enabled systems as well as step-by-step instructions and documentation for various processes.

Containers For Edge Computing And IoT

For IoT applications to be as nimble and scalable as cloud applications, developers will need to embrace container technology. The good news is, containers are here for edge computing and IoT applications, and they’re poised to shake up how such applications are deployed to the edge while also enabling new kinds of applications. Late last year, Siemens acquired Pixeom’s edge computing platform to make it easier for factory operators to create and manage edge applications. Among the startups pushing container innovation for IoT and edge applications is Nubix, which recently raised funding from investors and has developed a platform that can deploy containers to tiny, low-power chips known as microcontroller units that run on real-time operating systems. Another startup pushing containers for the edge is Zededa, which has built a secure and universal operating system that “decouples software from the diverse landscape of IoT edge hardware to make application development and deployment easier, secure and interoperable,” according to a recent blog post.

New Device Form Factors

While not a specific technology per se, new device form factors show how multiple vendors are starting to push the boundaries of what devices look like and how they function. That is perhaps most evident in the Lenovo ThinkPad X1 Fold, the first PC to feature a folding OLED display. In a review of the device, CRN’s Kyle Alspach said the ThinkPad X1 Fold has enough downsides that it is not ideal for most users; however, he called it a strong first attempt at a new device form factor that could gain traction with professional users. Another device making us rethink how mobile PCs look is Microsoft’s Surface Neo, a dual-screen device that comes with a modular keyboard. Among the key innovations making such form factors possible are new computer chips, like Intel’s Lakefield hybrid processors, which use a new 3D packaging technology to create dramatically smaller chips.

Passwordless Authentication

Will passwords go the way of the dinosaurs? Maybe that’s a little too dramatic, but multiple vendors are working toward a future in which passwords are not necessary, at least on a regular basis. Among the companies making a push for passwordless security are Microsoft, which is extending passwordless capabilities to Azure Active Directory, and ForgeRock, which expanded its passwordless authentication capabilities to remove usernames as a requirement for logging in. Identity management startup Beyond Identity raised a $75 million funding round from investors in December to make a big push for its passwordless identity platform, which debuted in April.

Robotic Process Automation

Enterprises want better ways to connect legacy systems with modern ones, and robotic process automation, or RPA for short, has become a critical technology for the many instances where APIs are not available. Research firm Gartner views RPA as a key component of the emerging “hyperautomation” trend, in which multiple machine learning, packaged software and automation tools are used together to automate processes and enable a higher volume of advanced analysis. Among the vendors making moves in the RPA space this year are Microsoft, which acquired Softomotive to create and deploy AI bots that automate business workflows; SAP, which launched version 2.0 of SAP Intelligent Robotic Process Automation, its software for automating repetitive, manual tasks with bots; and UiPath, which hired former VMware Carbon Black executive Thomas Hansen as its new chief revenue officer.

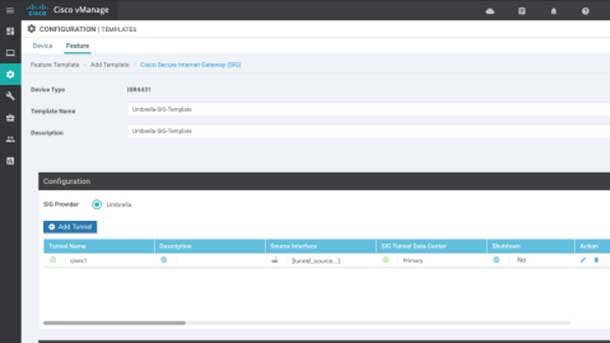

SASE

SASE, which stands for Secure Access Service Edge, is quickly gaining steam in the IT industry, with vendors creating new executive positions and making eight-figure acquisitions to gain a foothold in the fast-growing space. SASE combines wide area networking with network security functions like secure web gateway, cloud access security broker, firewall as a service and zero-trust network access to support the dynamic secure access needs of businesses. SASE tools can identify sensitive data or malware, decrypt content at line speed, and continuously monitor sessions for risk and trust levels. Among the vendors making big moves in SASE this year are Cisco, which expanded capabilities for its SecureX platform; Versa Networks, which launched new cloud-based offerings that target remote work use cases; and Cato Networks, which raised a $131 million round to expand its SASE platform.

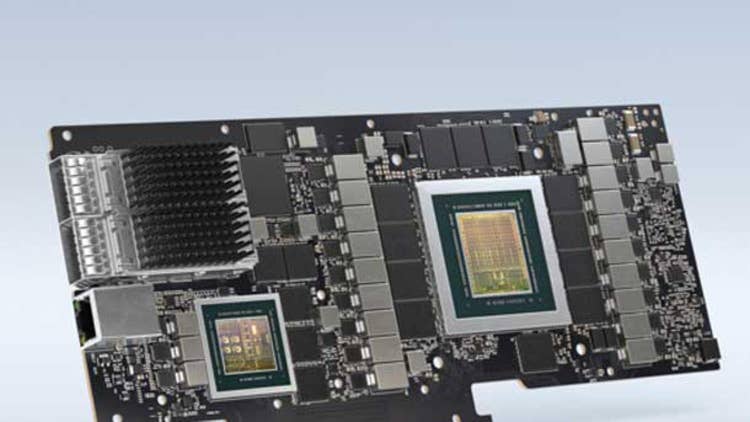

SmartNICs

Smart network interface cards, or SmartNICs for short, are poised to shake up the way data centers are architected, thanks to new investments and products from Intel, Nvidia and other companies. Using technologies gained from its acquisition of Mellanox Technologies, Nvidia introduced a new family of SmartNIC products in the fall that it refers to as data processing units, or DPUs, which combine Mellanox ConnectX-6 SmartNICs, Arm processing cores and, in the case of a few products, Nvidia GPUs. The company said a single BlueField-2 DPU can deliver the same level of performance for software-defined networking, security, storage and infrastructure management as 125 CPU cores, freeing up CPU headroom for enterprise applications. Intel is also pushing out new SmartNICs, including its FPGA-based Intel FPGA SmartNIC C5000X. AMD could also get in on the action with its planned $35 billion acquisition of Xilinx, which also makes FPGA-based SmartNICs. One semiconductor startup, Fungible, said its DPU-based rack solution can help consolidate workloads, increase utilization of storage media, and improve both footprint and dollars per IOPS by at least three times compared with existing software-defined solutions.